NVIDIA Tesla P100 Graphics Card - 16 GB HBM2 - Full-height - 900-2H400-0000-000

The World’s First AI Supercomputing Data Center GPU

NVIDIA® Tesla® P100 GPU accelerators are the world's first AI supercomputing data center GPUs. Built on the NVIDIA Pascal™ GPU architecture, they provide a unified platform for accelerating both HPC and AI. By delivering higher performance with fewer, lightning-fast nodes, Tesla P100 enables data centers to dramatically increase throughput while also saving money.


Exponential Performance Leap with Pascal Architecture

The NVIDIA Pascal architecture enables the Tesla P100 to deliver superior performance for HPC and hyperscale workloads. With more than 21 teraflops of FP16 performance, Pascal is optimized to drive exciting new possibilities in deep learning applications. For HPC workloads, Pascal also delivers over 5 teraflops of double-precision and over 10 teraflops of single-precision performance. The Tesla P100 tightly integrates compute and data on the same package by combining CoWoS® (Chip-on-Wafer-on-Substrate) packaging with HBM2 memory, delivering 3X the memory performance of the NVIDIA Maxwell™ architecture. This provides a generational leap in time-to-solution for data-intensive applications.
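The teraflops figures quoted for Pascal follow from simple peak-rate arithmetic: number of SMs, times cores per SM, times operations per clock, times clock speed. The sketch below is a back-of-the-envelope calculation only. It assumes values from NVIDIA's published Tesla P100 SXM2 specifications (56 SMs, 64 FP32 and 32 FP64 cores per SM, 1,480 MHz boost clock), none of which are stated in this listing; the PCIe version of the card runs at a lower boost clock, so its peak figures are correspondingly lower.

```python
# Assumed Tesla P100 (SXM2) configuration from NVIDIA's public specs, NOT from this listing.
SMS = 56                  # streaming multiprocessors
FP32_CORES_PER_SM = 64
FP64_CORES_PER_SM = 32
BOOST_CLOCK_GHZ = 1.480   # SXM2 boost clock; the PCIe card's clock is lower


def peak_tflops(cores_per_sm: int, flops_per_core_per_clock: int) -> float:
    """Peak throughput in TFLOPS: SMs x cores/SM x FLOPs/clock x clock (GHz) / 1000."""
    return SMS * cores_per_sm * flops_per_core_per_clock * BOOST_CLOCK_GHZ / 1000.0


# A fused multiply-add (FMA) counts as 2 floating-point operations per core per clock.
fp64 = peak_tflops(FP64_CORES_PER_SM, 2)
fp32 = peak_tflops(FP32_CORES_PER_SM, 2)
# GP100 can execute paired half-precision (half2) FMAs, doubling the FP32 rate for FP16.
fp16 = peak_tflops(FP32_CORES_PER_SM, 4)

print(f"FP64 {fp64:.1f}  FP32 {fp32:.1f}  FP16 {fp16:.1f} TFLOPS")
```

Under these assumptions the results land at roughly 5.3, 10.6 and 21.2 TFLOPS, which is consistent with the "over 5", "over 10" and "more than 21" teraflops claims. Real application throughput is typically well below these theoretical peaks.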

Applications at Massive Scale with NVIDIA NVLink

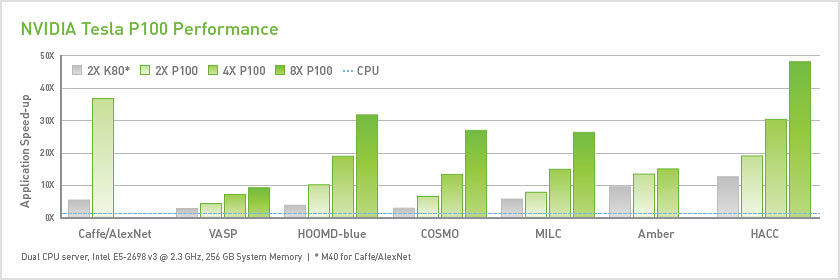

Performance is often throttled by the interconnect. The revolutionary NVIDIA NVLink™ high-speed bidirectional interconnect is designed to scale applications across multiple GPUs by delivering 5X the bandwidth of PCIe, today's best-in-class interconnect technology.

NVIDIA Tesla P100 for Strong-Scale HPC

Tesla P100 with NVIDIA NVLink technology enables lightning-fast nodes that substantially accelerate time-to-solution for strong-scale applications. A server node with NVLink can interconnect up to eight Tesla P100s at 5X the bandwidth of PCIe. It is designed to help solve the world's most important challenges in HPC and deep learning, where compute needs are virtually limitless.
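The "5X the bandwidth of PCIe" figure can be checked with a short calculation. This sketch assumes NVIDIA's published P100 NVLink configuration (4 links per GPU, 20 GB/s per link in each direction) and a nominal PCIe 3.0 x16 rate of 16 GB/s per direction. These parameters are not given in this listing, and NVLink applies to the SXM2 form factor rather than to PCIe cards.

```python
# Assumed link parameters (NVIDIA's published P100 figures), NOT stated in this listing.
NVLINK_LINKS_PER_GPU = 4
NVLINK_GBPS_PER_LINK_PER_DIRECTION = 20  # GB/s
PCIE3_X16_GBPS_PER_DIRECTION = 16        # GB/s nominal (about 15.75 after 128b/130b encoding)

# Aggregate bidirectional bandwidth: per-direction rate x 2 directions.
nvlink_total = NVLINK_LINKS_PER_GPU * NVLINK_GBPS_PER_LINK_PER_DIRECTION * 2
pcie_total = PCIE3_X16_GBPS_PER_DIRECTION * 2

ratio = nvlink_total / pcie_total
print(f"NVLink {nvlink_total} GB/s vs PCIe {pcie_total} GB/s -> {ratio:.1f}X")
```

With these assumed rates the script prints `NVLink 160 GB/s vs PCIe 32 GB/s -> 5.0X`. The comparison is between theoretical aggregate link rates. Achieved transfer speeds depend on topology and on how many links connect each pair of GPUs.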
