Exxact TensorEX 4U Deep Learning & AI Server - 2x 3rd Gen Intel Xeon Scalable - TS4-168747704-DPN

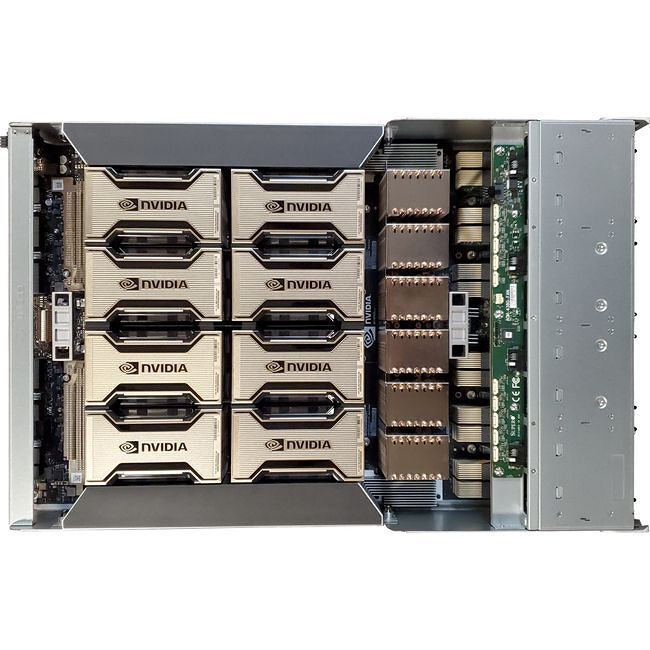

The TensorEX TS4-168747704-DPN is a 4U rack mountable HGX A100 Deep Learning & AI server supporting 2x Intel Xeon Scalable family processors, 32x DDR4 memory slots, and eight A100 Ampere GPUs (SXM4), with up to 600GB/s NVLINK interconnect.

GPUs have provided groundbreaking performance to accelerate deep learning research with thousands of computational cores and up to 100x application throughput when compared to CPUs alone. Exxact has developed the Deep Learning server, featuring NVIDIA GPU technology coupled with state-of-the-art NVLINK GPU-GPU interconnect technology, and a full pre-installed suite of the leading deep learning software, for developers to get a jump-start on deep learning research with the best tools that money can buy.

Features:

- NVIDIA DIGITS software providing powerful design, training, and visualization of deep neural networks for image classification

- Pre-installed standard Ubuntu 18.04/20.04 w/ Exxact Machine Learning Image (EMLI)

- Google TensorFlow software library

- Automatic software update tool included

- A turn-key server with NVLINK GPU-GPU interconnect topology.

An EMLI Environment for Every Developer

Conda EMLI

For developers who want pre-installed deep learning frameworks and their dependencies in separate Python environments installed natively on the system.

Container EMLI

For developers who want pre-installed frameworks utilizing the latest NGC containers, GPU drivers, and libraries in ready to deploy DL environments with the flexibility of containerization.

DIY EMLI

For experienced developers who want a minimalist install to set up their own private deep learning repositories or custom builds of deep learning frameworks.

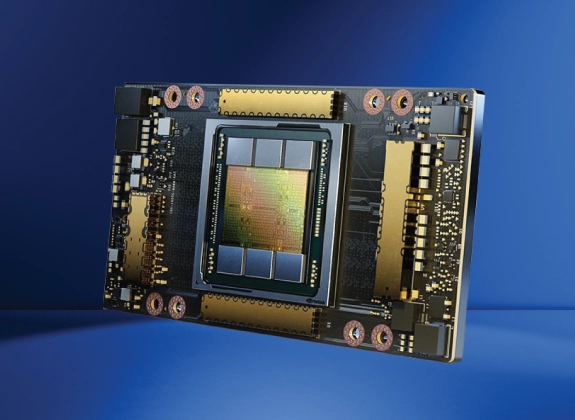

NVIDIA A100 Tensor Core GPU

Blistering Double Precision Accelerator for AI & HPC

NVIDIA A100 introduces double-precision Tensor Cores, providing the most significant milestone since the introduction of double-precision computing in GPUs for HPC. This enables researchers to reduce a 10-hour, double-precision simulation running on NVIDIA V100 Tensor Core GPUs to just four hours on A100.

- Accelerates and enables the most serious HPC and data center workloads

- With 80GBs of High Bandwidth Memory (HBM2e), A100 never skips a beat

A100 SXM4 GPU Options

| Model | Standard Memory | Memory Bandwidth (GB/s) | CUDA Cores | Tensor Cores | Single Precision (TFLOPS) | Double Precision (TFLOPS) | Power (W) | Explore |

|---|---|---|---|---|---|---|---|---|

| A100 80 GB SXM4 | 80 GB HBM2e | 2039 | 6912 | 432 | 19.5 | 9.7 | 400 | --- |

Other Features

Introducing 3rd Gen Intel® Xeon® Scalable Processors

Introducing the 3rd Gen Intel® Xeon® Scalable processors, a balanced architecture that delivers built-in AI acceleration and advanced security capabilities, which allow you to place your workloads more securely where they perform best - from Edge to Cloud.

The 3rd Gen Intel Xeon Scalable processor benefits from decades of innovation for the most common workload requirements, supported by close partnerships and deep integrations with the world’s software leaders. 3rd Gen Intel Xeon Scalable processors are optimized for many workload types and performance levels, all with the consistent, compatible, Intel architecture you know and trust.

Industry Leading Performance

Core-for-core, 3rd Gen Intel® Xeon® Scalable processors offer industry leading performance on popular databases, HPC workloads, virtualization and AI.

The 3rd Gen Intel® Xeon® Scalable processors deliver 1.5X more performance than other CPUs across 20 popular machine and deep learning workloads.

3rd Gen Intel® Xeon® Scalable processors deliver on average up to 62% more performance on a range of broadly-deployed network and 5G workloads over the prior generation, offering users huge performance increases while maintaining the convenience and compatibility of their architecture.

For key AI workloads, 3rd Gen Intel® Xeon® Scalable processors deliver up to74% increase in AI performance on the deep learning topology BERT while maintaining full compatibility.