MPN: TS4-1642659-DPN

Exxact TensorEX 4U 1x Intel Core X-Series CPU - Deep Learning & AI Server - TS4-1642659-DPN

Highlights

Rack Height: 4U

Processor: 1x Intel Core X-Series

Drive Bays: 8x 3.5" Hot-Swap

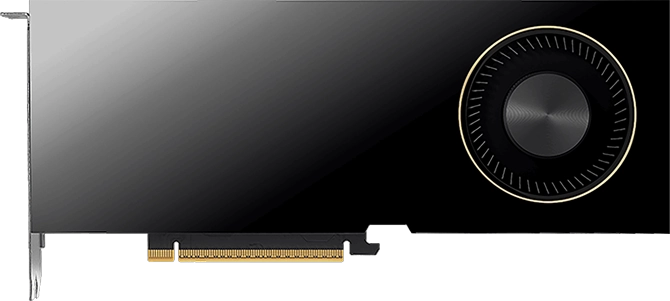

Supports up to 4x Double-Wide cards

About

The TensorEX TS4-1642659-DPN is a 4U rack-mountable Deep Learning & AI server that supports 1x Intel Core X-Series processor, up to 128 GB of DDR4 memory, and up to 4x Double-Wide cards.

An EMLI Environment for Every Developer

Conda EMLI

Separated Frameworks

For developers who want pre-installed deep learning frameworks and their dependencies in separate Python environments installed natively on the system.

Container EMLI

Flexible. Reconfigurable.

For developers who want pre-installed frameworks built on the latest NGC containers, GPU drivers, and libraries, delivered as ready-to-deploy deep learning environments with the flexibility of containerization.

DIY EMLI

Simple. Clean. Custom.

For experienced developers who want a minimalist install to set up their own private deep learning repositories or custom builds of deep learning frameworks.