NVIDIA GTC 2026 Recap - Focusing on The Era of Tokens & Inference

NVIDIA GTC 2026 Recap Introduction

NVIDIA's GPU Technology Conference returned to San Jose this year with the energy of a company that knows it's at the center of one of the biggest technological shifts in history. Jensen Huang took the stage to cover everything from chip architecture and inference economics to open-source agentic frameworks and physical robotics — a lot of ground for one keynote.

At Exxact, we pay close attention to GTC every year because what NVIDIA announces directly shapes the AI infrastructure landscape our customers are building on. This recap breaks down the highlights and what they mean for organizations investing in AI today.

CUDA Turns 20: The Foundation Behind NVIDIA's Platform

CUDA, NVIDIA's parallel computing platform, turned 20 this year. Jensen used the anniversary as a framing device for the rest of the keynote: most of what NVIDIA has built, and continues to build, traces back to this foundation.

A few things worth noting about where CUDA stands today:

- Installed base: Hundreds of millions of CUDA-capable GPUs are deployed across every major cloud provider, OEM, and industry vertical.

- Backward compatibility: NVIDIA continues to optimize its software stack across older architectures, meaning deployed hardware gains performance improvements over time without replacement.

- Ecosystem scale: Thousands of libraries, frameworks, and tools are built on CUDA, with hundreds of thousands of public projects dependent on it.

- Library growth: Downloads of CUDA libraries are growing faster than ever, reflecting the expanding range of workloads CUDA now supports, from traditional HPC to AI training, inference, and data processing.

The core point Jensen made is that the value of NVIDIA's platform isn't just the hardware; it's the compounding effect of two decades of software, tooling, and developer adoption built on top of it. That installed base is also why cloud pricing for older NVIDIA hardware, such as Ampere, is still rising even though those GPUs are several generations old.

The Inference Inflection Point

For most of NVIDIA's history, training was the dominant AI workload and the industry's main focus. That has recently shifted. Jensen made the case that inference is now the primary driver of compute demand, and that the economics of AI businesses are increasingly defined by how efficiently they can generate tokens. In his framing, the more tokens a system can hold in memory, and the faster it can process and generate them, the smarter the AI.

Three developments over the past few years drove this shift:

- Generative AI (2022–2023): Models moved from perception and classification to content generation, fundamentally changing how compute is consumed.

- Reasoning models (2024): Models like OpenAI's o1 and o3 introduced chain-of-thought processing — the model "thinks" before responding, which significantly increases both input and output token usage per query.

- Agentic AI (2024–2025): Tools like Claude Code enabled models to autonomously read files, write and test code, and iterate on tasks. This moved AI from answering questions to completing multi-step work, multiplying compute demand further.

Source: NVIDIA

The cumulative effect: Jensen estimated that compute demand per AI workload has increased roughly 10,000x over two years, while overall usage has grown approximately 100x. Together, these figures imply total compute demand on the order of one million times greater than two years ago.
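The compounding in Jensen's estimate is plain multiplication. Here is a minimal sketch that uses only the rounded figures quoted above. The annualized rate is an added illustration. It assumes the growth was spread evenly across the two years, which the keynote did not claim.

```python
# Rounded keynote estimates quoted above (illustrative, not measured data).
PER_WORKLOAD_GROWTH = 10_000  # compute demand per AI workload, over ~2 years
USAGE_GROWTH = 100            # growth in overall usage, over ~2 years
YEARS = 2

# Total demand scales with (compute per workload) x (amount of usage).
total_growth = PER_WORKLOAD_GROWTH * USAGE_GROWTH

# Assumption: an even year-over-year rate, i.e. the YEARS-th root of the total.
annualized_growth = total_growth ** (1 / YEARS)

print(f"Implied total compute growth: {total_growth:,}x")
print(f"Equivalent even annual growth: {annualized_growth:,.0f}x per year")
```

Under the even-growth assumption, a millionfold increase over two years works out to roughly 1,000x per year.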

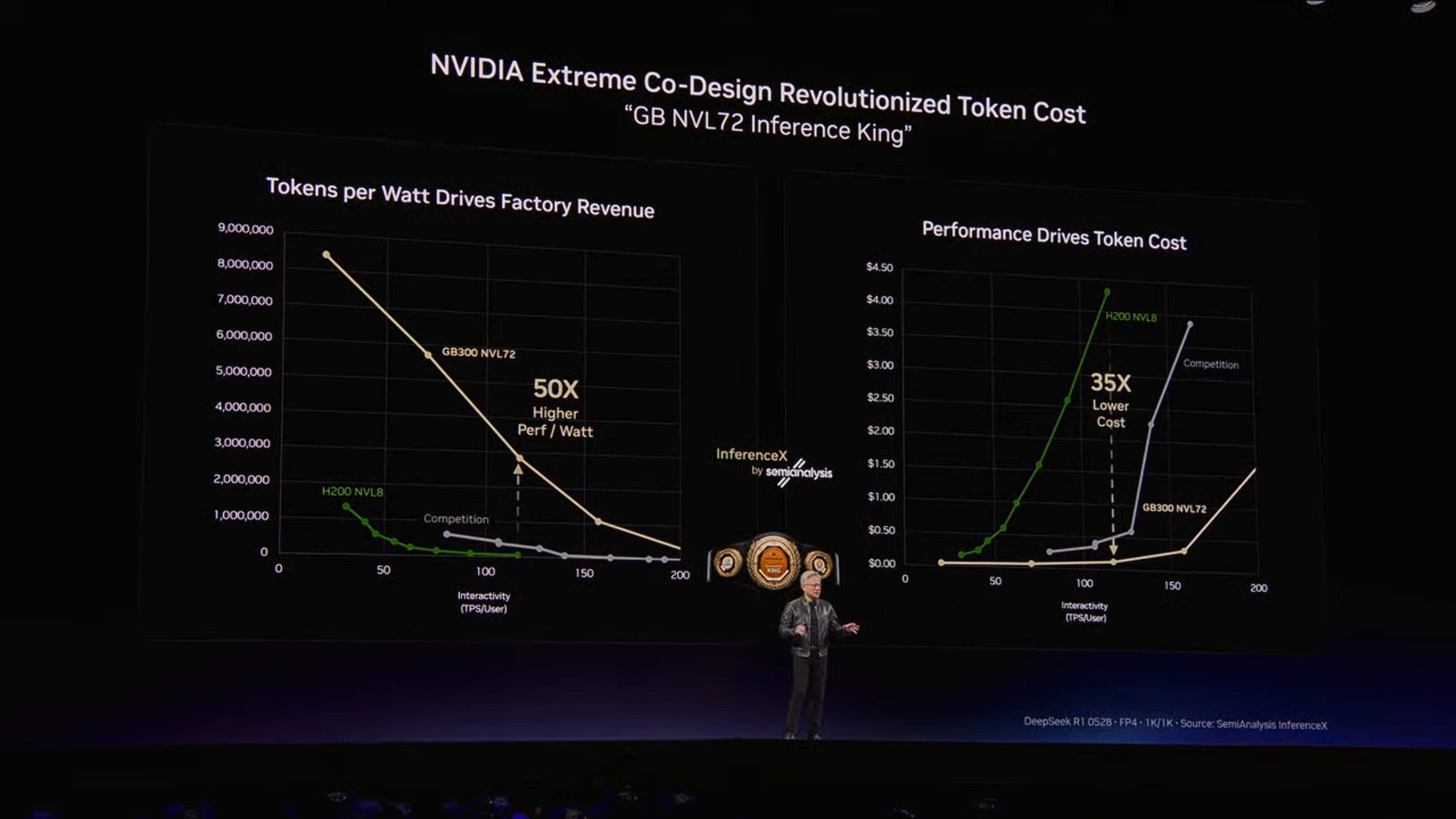

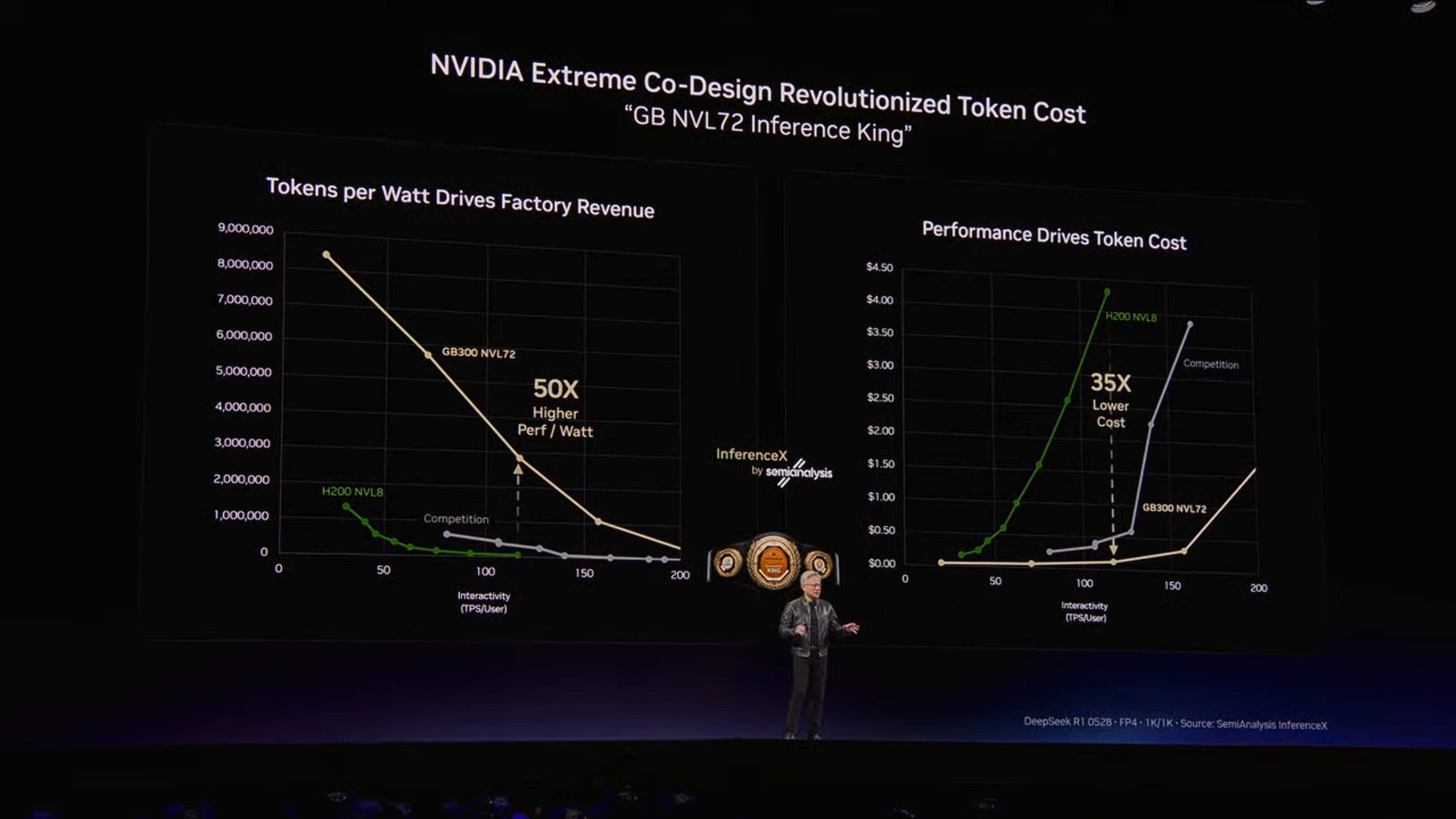

Jensen framed this shift with a concept worth understanding: the AI data center as a token factory.

- Every data center has a fixed power ceiling; once built, that constraint doesn't change.

- The relevant metric for an AI operator is therefore tokens per watt: how much useful AI output you can generate from your available power budget.

- A second axis matters equally: token speed (latency per inference), which determines what tiers of service you can offer and at what price points.

- Higher token speed enables larger models, longer context windows, and more reasoning, all of which command higher pricing.

This framing has direct implications for infrastructure decisions. The architecture you deploy determines your token throughput and token speed at a fixed power envelope, and those two numbers map directly to revenue potential. Jensen's point was that AI infrastructure ROI should be evaluated this way going forward, rather than by raw compute specs alone.
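To make the token-factory framing concrete, here is a minimal sketch that converts a fixed power budget into daily tokens and revenue. "Tokens per watt" is treated here as tokens per second per watt, which equals tokens per joule. Every number below is a hypothetical placeholder, not an NVIDIA figure. That includes the 100 MW budget, the efficiency of each service tier, and the prices.

```python
from dataclasses import dataclass

SECONDS_PER_DAY = 86_400

@dataclass(frozen=True)
class ServiceTier:
    name: str
    tokens_per_joule: float        # tokens/s per watt at this speed setting (hypothetical)
    usd_per_million_tokens: float  # price this speed tier can command (hypothetical)

def tokens_per_day(power_watts: float, tier: ServiceTier) -> float:
    # Watts are joules per second, so W * (tokens/J) = tokens/s.
    return power_watts * tier.tokens_per_joule * SECONDS_PER_DAY

def revenue_per_day(power_watts: float, tier: ServiceTier) -> float:
    return tokens_per_day(power_watts, tier) / 1e6 * tier.usd_per_million_tokens

POWER_BUDGET_W = 100e6  # hypothetical 100 MW facility; fixed once built

# A faster tier typically runs smaller batches, so it yields fewer tokens per joule
# but can be priced higher. Both tiers are made-up examples.
bulk = ServiceTier("bulk", tokens_per_joule=2.0, usd_per_million_tokens=0.50)
fast = ServiceTier("interactive", tokens_per_joule=0.5, usd_per_million_tokens=5.00)

for tier in (bulk, fast):
    print(f"{tier.name:>11}: {tokens_per_day(POWER_BUDGET_W, tier):.3e} tokens/day, "
          f"${revenue_per_day(POWER_BUDGET_W, tier):,.0f}/day")
```

With these made-up numbers, the interactive tier produces a quarter of the tokens but more than twice the revenue. The power ceiling stays fixed. What the hardware changes is how far both the efficiency and the speed axes can be pushed under it.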

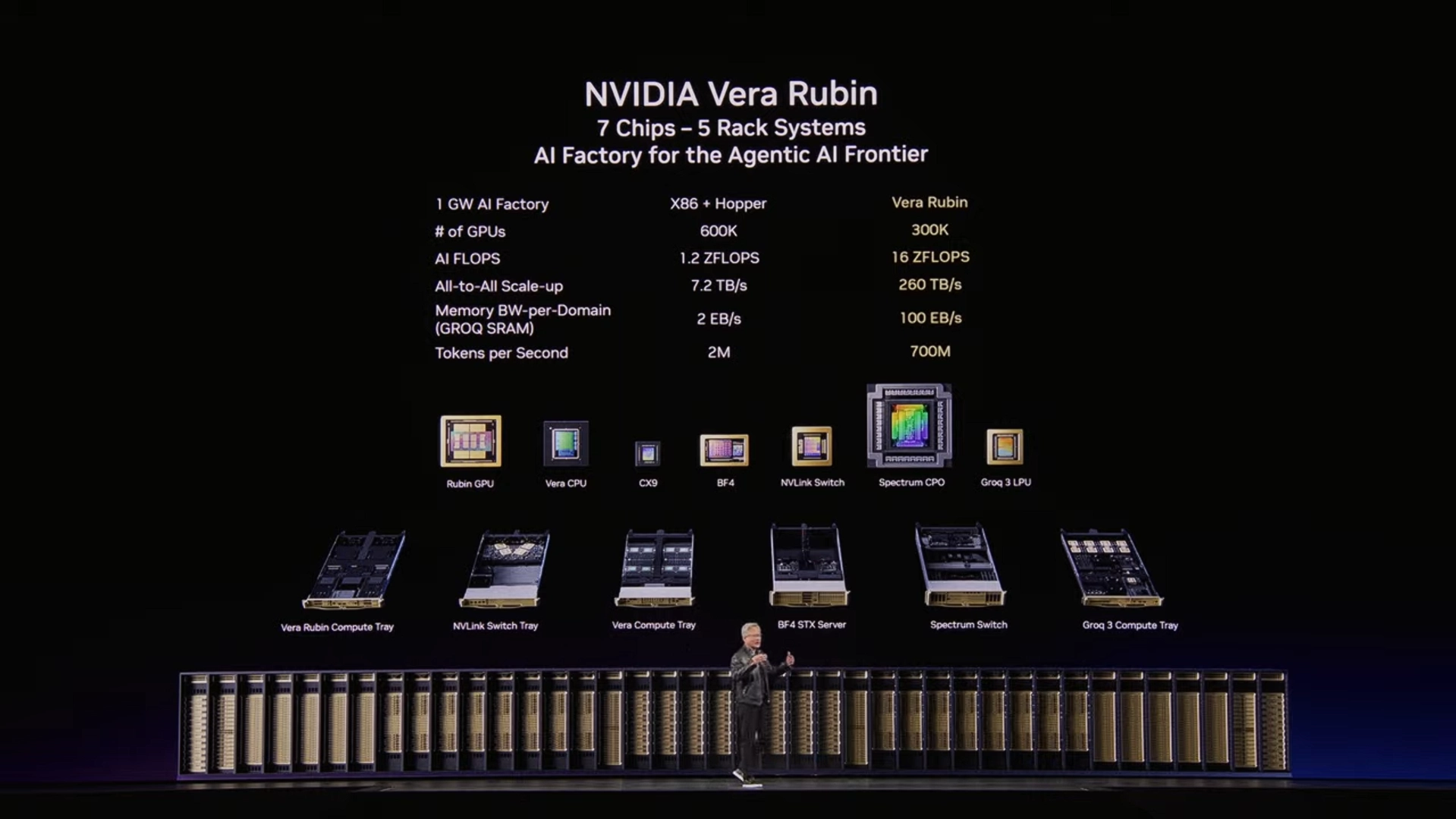

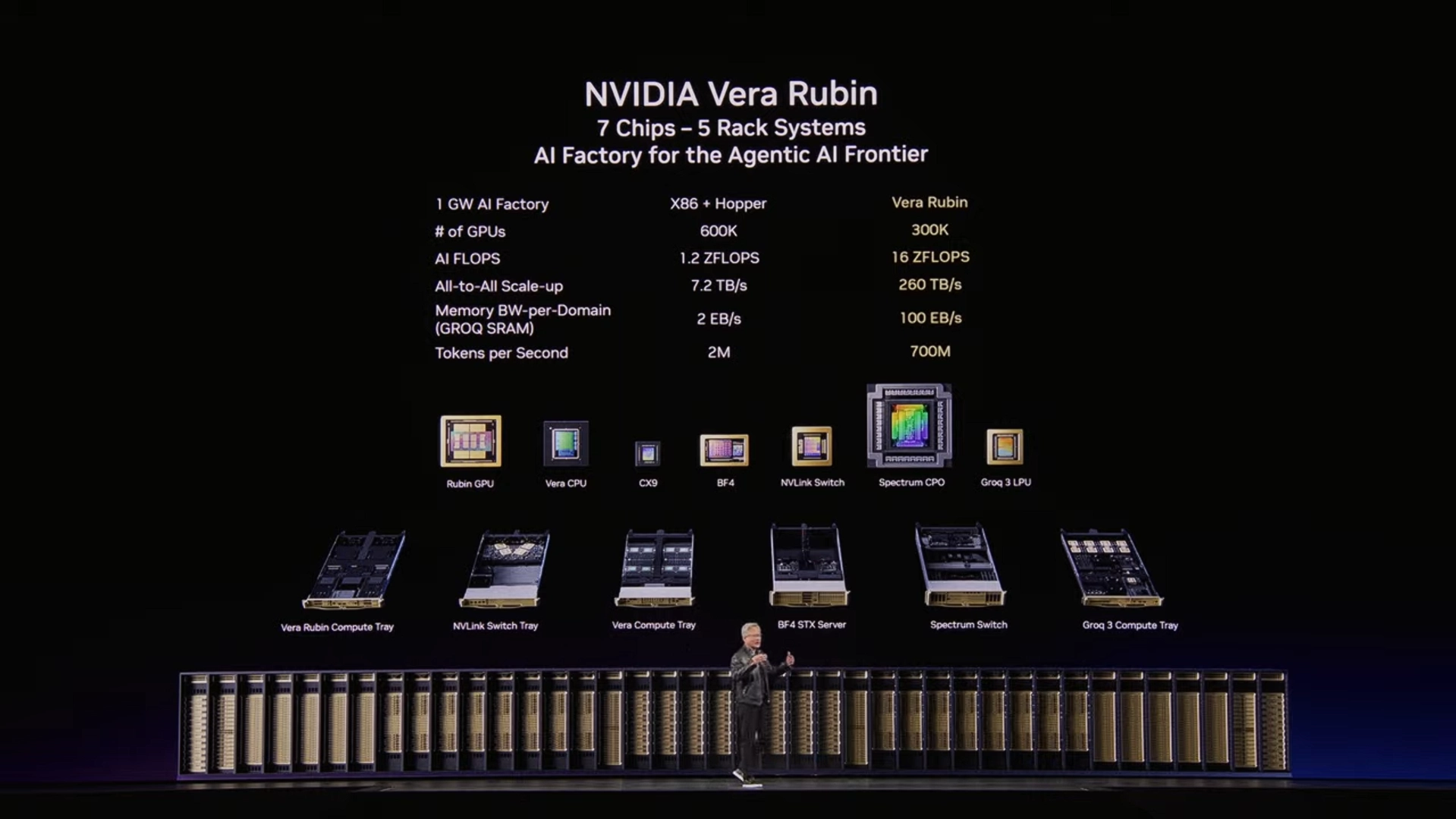

Vera Rubin: NVIDIA's Next-Generation AI Platform

Blackwell is currently in full production and shipping at scale, but NVIDIA used GTC to formally introduce Vera Rubin, the next-generation platform architected around the demands of agentic AI workloads. The first Vera Rubin rack is already operational at Microsoft Azure.

NVIDIA Vera Rubin NVL72

Vera Rubin is designed as a full-system platform, not just a new GPU. Key specs:

- 3.6 exaflops of compute per rack

- 260 TB/s all-to-all NVLink bandwidth

- 72 GPUs connected via sixth-generation NVLink

- Fully liquid-cooled, including NVLink switching infrastructure

- Hot water cooling at 45°C, reducing data center cooling overhead

NVIDIA Groq 3 LPX

One of the more significant announcements was NVIDIA's acquisition of the Groq chip team and integration of the Groq 3 LPX into the Vera Rubin platform. The two chips serve fundamentally different roles:

- Vera Rubin GPU: High memory capacity (288GB per chip), optimized for throughput-heavy workloads and KV cache storage

- Groq 3 LPX: Massive on-chip SRAM, statically compiled, deterministic data flow optimized for low-latency token generation (decode)
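The "KV cache storage" role is easier to picture with the standard sizing arithmetic. Each cached token stores one key vector and one value vector per layer per KV head. The sketch below rests on several hypothetical assumptions:

- an 80-layer model with grouped-query attention (8 KV heads, head dimension 128)
- FP16 cache entries
- about 140 GB of the 288 GB reserved for weights

It ignores activations, memory fragmentation, and KV cache quantization.

```python
def kv_bytes_per_token(num_layers: int, num_kv_heads: int, head_dim: int,
                       bytes_per_elem: int) -> int:
    # Factor of 2: one key vector and one value vector per layer per KV head.
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_elem

def max_cached_tokens(memory_bytes: int, reserved_bytes: int, per_token: int) -> int:
    # Memory not reserved (e.g. for weights) is treated as available for KV cache.
    return max(0, memory_bytes - reserved_bytes) // per_token

GPU_MEMORY = 288 * 10**9  # 288 GB per Vera Rubin GPU, as stated above
WEIGHTS = 140 * 10**9     # hypothetical: ~70B parameters stored in FP16

per_token = kv_bytes_per_token(num_layers=80, num_kv_heads=8, head_dim=128, bytes_per_elem=2)
capacity = max_cached_tokens(GPU_MEMORY, WEIGHTS, per_token)
sessions_32k = capacity // 32_768  # concurrent 32K-token contexts that fit

print(f"{per_token:,} bytes/token -> {capacity:,} cached tokens "
      f"-> {sessions_32k} concurrent 32K-token sessions")
```

Under these assumptions, each token costs 327,680 bytes of cache. That leaves room for about 451,000 cached tokens, or 13 concurrent 32K-token sessions per GPU. This is why memory capacity caps how many long-context users a chip can hold at once.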

NVIDIA's inference orchestration software, Dynamo, splits the workload between them: Vera Rubin handles the prefill and attention portions of inference, while Groq handles the token generation (feedforward decode) stage. NVIDIA says the result is 35x more throughput per megawatt than running Vera Rubin alone for latency-sensitive workloads.
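The split Dynamo performs is a form of disaggregated serving. One pool of hardware builds the KV cache from the prompt, and another generates output tokens from it, so the two phases can overlap across requests. The sketch below is a simplified two-phase timing model, not Dynamo's API, and makes these simplifications:

- It lumps prefill and attention into one phase and token generation into the other, rather than modeling the finer attention/feedforward split described above.
- It processes one request at a time per pool.
- It ignores the cost of moving the KV cache between pools.
- It uses made-up per-pool rates.

```python
from dataclasses import dataclass

@dataclass
class Request:
    req_id: int
    prompt_tokens: int
    output_tokens: int

@dataclass
class Pool:
    name: str
    tokens_per_second: float  # hypothetical rate for this pool's phase
    busy_until: float = 0.0   # simulated time at which the pool becomes free

    def run(self, ready_at: float, tokens: int) -> float:
        # Start once the input is ready and the pool is free; return the finish time.
        start = max(ready_at, self.busy_until)
        self.busy_until = start + tokens / self.tokens_per_second
        return self.busy_until

def serve(requests: list[Request], prefill: Pool, decode: Pool) -> dict[int, float]:
    finish = {}
    for r in requests:
        kv_ready = prefill.run(0.0, r.prompt_tokens)               # phase 1: build KV cache
        finish[r.req_id] = decode.run(kv_ready, r.output_tokens)  # phase 2: generate tokens
    return finish

prefill_pool = Pool("prefill (throughput-oriented)", tokens_per_second=10_000)
decode_pool = Pool("decode (latency-oriented)", tokens_per_second=1_000)
reqs = [Request(1, prompt_tokens=2_000, output_tokens=500),
        Request(2, prompt_tokens=4_000, output_tokens=300)]

for req_id, t in serve(reqs, prefill_pool, decode_pool).items():
    print(f"request {req_id} finished at t={t:.2f}s")
```

In this run, request 2's prefill proceeds while request 1 is still decoding. That overlap is what lets each pool be built around the hardware best suited to its own phase.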

NVIDIA Rubin Ultra

Beyond the standard Vera Rubin configuration, NVIDIA also announced Rubin Ultra, a higher-density variant using a new rack design called Kyber:

- Connects 144 GPUs in a single NVLink domain (vs. 72 in standard Vera Rubin)

- Compute nodes slide in vertically and connect via a midplane backplane rather than copper cabling

- Enables NVLink scaling beyond what copper interconnects can support

These two metrics, throughput and token speed, map directly to the revenue potential of a given infrastructure deployment. NVIDIA presented generation-over-generation comparisons at a fixed 1 gigawatt power envelope:

- Blackwell delivered a substantial improvement over Hopper, and Vera Rubin is projected to deliver approximately 5x more revenue potential per gigawatt than Blackwell

- The architecture you deploy determines your output capacity at a fixed power budget, which sets a ceiling on what you can generate from that 1 gigawatt limit.

Accelerate AI Training with an NVIDIA DGX

Training AI models on massive datasets can be accelerated dramatically with the right system. It's not just a high-performance computer, but a tool to propel and accelerate your research. Deploy multiple NVIDIA DGX nodes for increased scalability. DGX B200 and DGX B300 are available today!


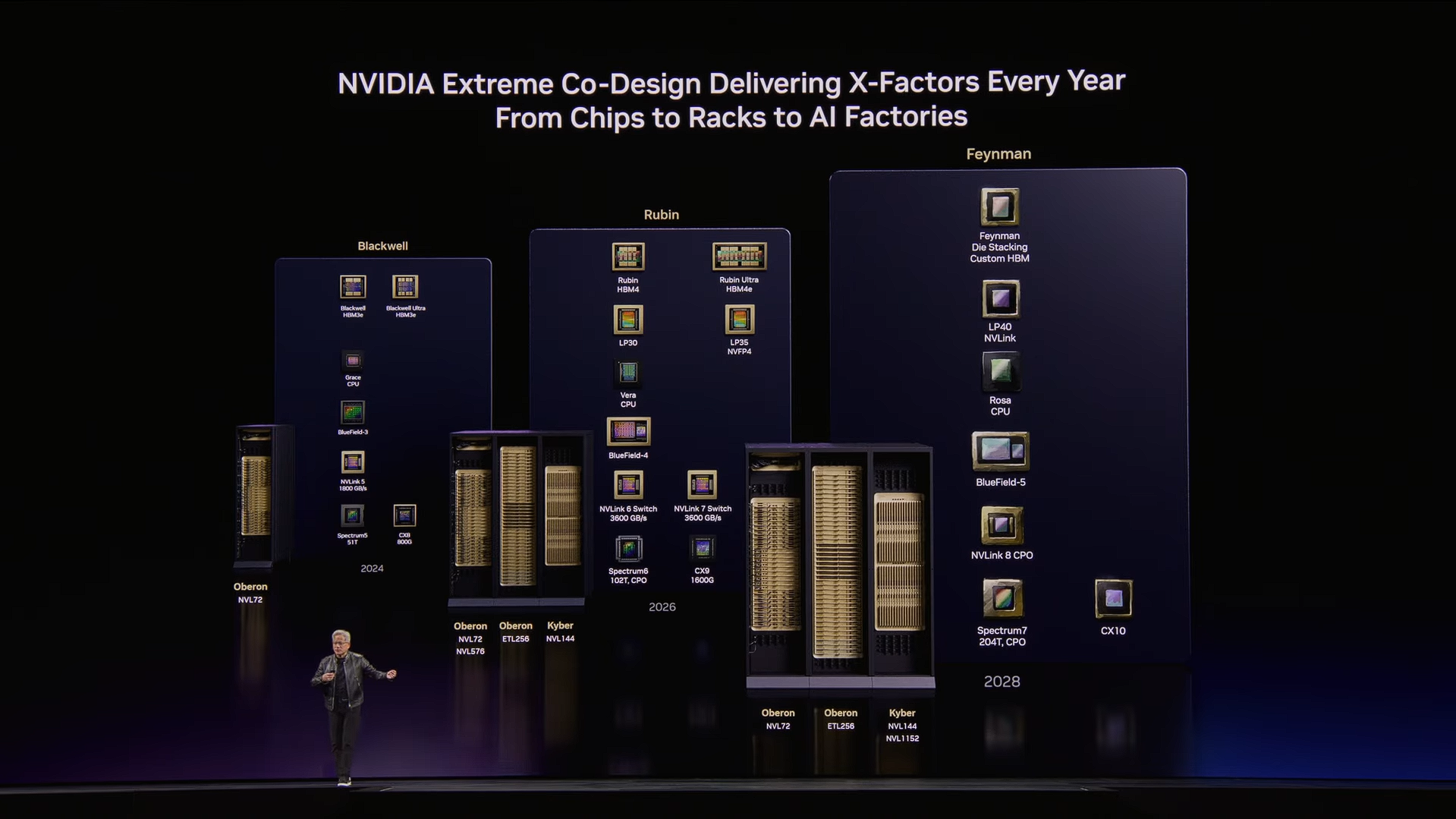

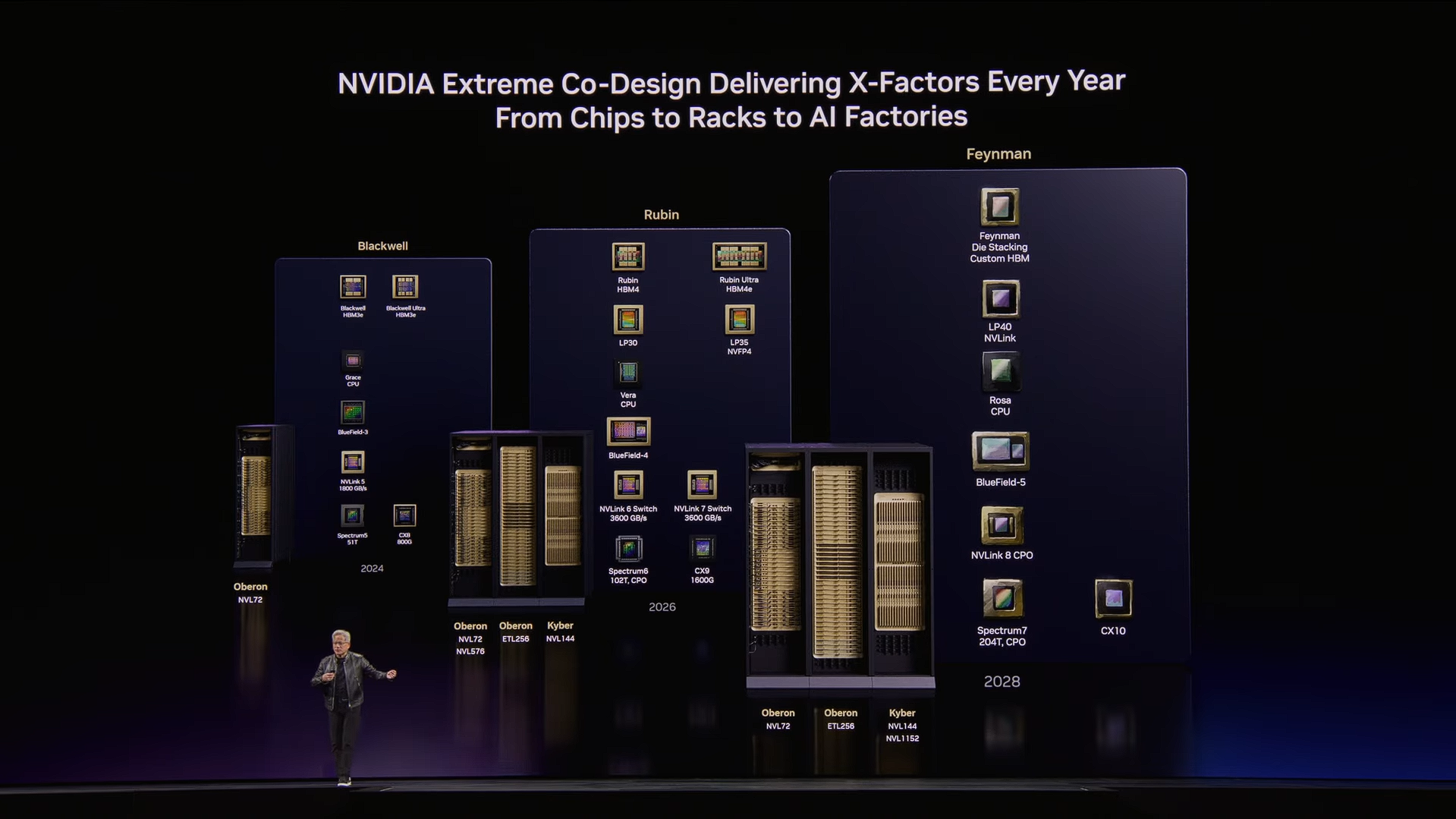

Vera Rubin (current/near-term)

Two configurations will be available:

- Standard Vera Rubin (Kyber rack): NVLink 144 with copper Scale-up

- Vera Rubin with Oberon: NVLink 72 plus optical Scale-up, expandable to NVLink 576 via co-packaged optics and Spectrum 6 switching

Both are in production. NVIDIA also noted that the Vera CPU is already selling as a standalone product and drawing strong interest.

Rubin Ultra (next generation)

- New Kyber rack design supporting 144 GPUs in a single NVLink domain

- Introduces the Groq LP 35 chip, which will incorporate NVIDIA's MVRP compute structure for additional performance gains

- Co-packaged optics available for both Scale-up and Scale-out networking

Feynman (future generation)

- New GPU architecture paired with the Groq LP 40

- New CPU called Rosa (Rosalind), paired with BlueField 5 and ConnectX-10

- Both copper and co-packaged optical Scale-up supported

- Continues the annual architecture cadence NVIDIA has committed to

Key components shipping across generations

- Vera CPU: Designed for high single-threaded performance and data processing workloads, using LPDDR5 memory for efficiency. Targeted at agentic orchestration and tool-use workloads

- BlueField 4: New storage and networking DPU, with 100% of major storage vendors committed to the platform

- Spectrum X with co-packaged optics: World's first CPO Ethernet switch, in full production

- ConnectX-9: Updated networking paired with Vera CPU

NVIDIA's broader point on the roadmap is that the platform is designed for continuity. Each generation maintains backward compatibility, and the shift from copper to optical interconnects will happen incrementally across both Scale-up and Scale-out rather than as a hard cutover.

AI Factories and Industry Adoption

A recurring theme throughout the keynote was NVIDIA's expansion beyond chips and systems into the infrastructure layer that surrounds them. Two areas reflect this most clearly: the tooling NVIDIA has built for designing and operating AI factories, and the breadth of industries now building on NVIDIA platforms.

NVIDIA DSX: Digital Twin Platform for AI Factories

As AI factories grow in scale and complexity, NVIDIA introduced DSX, an Omniverse-based digital twin platform built for designing, commissioning, and operating large-scale AI infrastructure. The core idea is that the various technology vendors involved in building a data center (cooling, power, networking, and compute) need a shared environment to co-design in before anything is physically built.

DSX covers the full facility lifecycle:

- Design and simulation: Thermal, electrical, mechanical, and network simulation using tools from partners including Siemens, Cadence, and Dassault Systèmes

- Virtual commissioning: Facilities can be validated digitally through Procore before construction begins, reducing delays

- Live operations: Once a facility is running, the digital twin becomes an operational tool. AI agents monitor cooling, electrical systems, and grid signals in real time, feeding into NVIDIA's Max-Q system which dynamically adjusts compute workloads to maximize token throughput within power constraints
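Power-aware scheduling can be shown with a toy allocator. Given a facility power cap, it gives power first to the workloads that turn watts into tokens most efficiently, and it re-plans when the cap changes. This is a hypothetical illustration, not how Max-Q works. It assumes throughput scales linearly with power up to each workload's maximum; under that assumption, this greedy order maximizes total tokens per second. Real GPU power/performance curves are not linear. Workload names and figures are made up.

```python
def allocate_power(budget_mw: float, jobs: list[tuple[str, float, float]]):
    """jobs: (name, tokens_per_joule, max_mw). Returns (plan_mw, tokens_per_second)."""
    plan = {}
    remaining = budget_mw
    # Most efficient workloads first; under the linear assumption this greedy
    # fill (a fractional knapsack) maximizes total token throughput.
    for name, tokens_per_joule, max_mw in sorted(jobs, key=lambda j: j[1], reverse=True):
        plan[name] = min(max_mw, remaining)
        remaining -= plan[name]
    throughput = sum(plan[name] * 1e6 * tpj for name, tpj, _ in jobs)  # MW -> W
    return plan, throughput

jobs = [("agents", 2.0, 60.0),
        ("long-context", 1.0, 60.0),
        ("chat", 3.0, 30.0)]

for cap in (100.0, 70.0):  # e.g. a grid signal lowers the cap from 100 MW to 70 MW
    plan, tps = allocate_power(cap, jobs)
    print(f"cap {cap:.0f} MW -> {plan}, {tps:.3e} tokens/s")
```

When the cap drops, the least efficient workload gives up its power first. In this example, total throughput falls from 2.2e8 to 1.7e8 tokens/s instead of shrinking proportionally across all workloads.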

The underlying argument is that at gigawatt scale, inefficiencies in how a facility is designed and operated translate directly to lost token output and lost revenue. NVIDIA estimates there is roughly a factor of two in recoverable efficiency across a typical AI factory deployment.

Industry Verticals

Jensen covered the range of industries now building on NVIDIA infrastructure, each relying on domain-specific CUDA-X libraries rather than general-purpose compute alone:

- Financial services: The most represented industry at GTC this year. Algorithmic trading is shifting from classical quantitative methods to deep learning models that discover patterns across large datasets without human feature engineering

- Healthcare: Drug discovery, diagnostic AI agents, and physical AI robotics for clinical settings. NVIDIA cited this as going through its "ChatGPT moment" in terms of adoption velocity

- Automotive: Self-driving and robotaxi platforms, covered in more detail in the robotics section below

- Telecom: NVIDIA's Aerial platform enables AI-RAN, converting traditional base stations into AI inference infrastructure at the edge. Active partnerships with Nokia and T-Mobile

- Industrial and manufacturing: Simulation-driven robot deployment for factory automation, with integration across major industrial automation vendors

- Media and entertainment: Real-time AI for broadcast, live translation, and gaming, built on NVIDIA's Holoscan platform

- Retail and CPG: Supply chain optimization and AI agents for customer support across a $35 trillion industry

- Quantum computing: 35 companies at GTC building hybrid GPU-quantum systems using NVIDIA's cuQuantum platform

The breadth here reflects a consistent pattern in how NVIDIA approaches verticals. Rather than positioning GPUs as general-purpose accelerators, NVIDIA gives each domain purpose-built CUDA-X libraries that solve specific algorithmic problems in that field. Jensen described these libraries as the core of what differentiates NVIDIA's platform over the long term.

OpenClaw and the Agentic AI Framework

One of the more significant announcements at GTC was NVIDIA's support for OpenClaw, an open-source agentic AI framework that has seen unusually rapid adoption since its release. Jensen described it as the fastest-adopted open-source project in history, surpassing Linux's 30-year install base within weeks.

What is OpenClaw?

OpenClaw functions as an operating system for AI agents. The components map closely to what a traditional OS provides:

- Resource management across file systems, tools, and language models

- Task scheduling and cron job execution

- Problem decomposition: breaking a prompt into sequential steps

- Sub-agent spawning for parallel workstreams

- Multimodal I/O including text, voice, and messaging

The practical result is that anyone can spin up a functioning AI agent by running a single command. The agent connects to a language model, receives a task, and executes it autonomously across tools and systems.
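The OS analogy maps onto a small control loop. The agent asks the model for a plan, runs the steps in order, and fans independent sub-tasks within a step out to parallel sub-agents. The sketch below is a hypothetical illustration of that pattern, not OpenClaw's API. Several conventions are assumptions made for the example:

- the `PLAN:` prompt convention
- the `tool: argument` step format
- the `|` separator for parallel sub-tasks
- the stub model

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

Model = Callable[[str], str]
Tool = Callable[[str], str]

def dispatch(subtask: str, model: Model, tools: dict[str, Tool]) -> str:
    # "name: arg" runs a registered tool; anything else goes to the model.
    name, sep, arg = subtask.partition(":")
    tool = tools.get(name.strip()) if sep else None
    return tool(arg.strip()) if tool else model(subtask)

def run_agent(task: str, model: Model, tools: dict[str, Tool],
              max_workers: int = 4) -> list[list[str]]:
    # Problem decomposition: the model returns one step per line.
    plan = model(f"PLAN: {task}")
    steps = [line for line in plan.splitlines() if line.strip()]
    transcript: list[list[str]] = []
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        for step in steps:  # steps run sequentially
            subtasks = [s.strip() for s in step.split("|")]
            # Sub-agent spawning: independent sub-tasks within a step run in parallel.
            transcript.append(list(pool.map(lambda s: dispatch(s, model, tools), subtasks)))
    return transcript

def stub_model(prompt: str) -> str:
    # Stand-in for a real language model call.
    if prompt.startswith("PLAN:"):
        return "search: Vera Rubin | search: Groq 3 LPX\nsummarize: findings"
    return f"model({prompt})"

tools = {"search": lambda q: f"notes on {q}"}
print(run_agent("Brief me on the GTC 2026 inference hardware", stub_model, tools))
```

A fuller agent would feed earlier step results into later steps and add cron-style scheduling. Both are left out to keep the sketch short.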

The capability that makes OpenClaw useful also creates security risk in a corporate environment. An agentic system with access to internal infrastructure can read sensitive data, execute code, and communicate externally. NVIDIA worked with OpenClaw's author to address this with an enterprise-ready reference design called NeMo Claw, which adds:

- A policy engine for governing agent behavior

- Network guardrails

- A privacy router to prevent unauthorized data exfiltration

- Integration hooks for existing enterprise SaaS policy systems

NeMo Claw is available to download and deploy and is designed to connect with the policy engines that enterprise software vendors already maintain.
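The guardrail components can be pictured as checks that sit between the agent and the outside world. The sketch below is a hypothetical illustration, not NeMo Claw's interface. It pairs a policy object that allowlists tools and network hosts with a crude regex-based "privacy router". The router blocks egress to unlisted hosts and redacts secret-looking strings before anything leaves. Host names and patterns are made-up examples. A real deployment would delegate these decisions to the enterprise's existing policy engine.

```python
import re
from dataclasses import dataclass
from urllib.parse import urlparse

@dataclass(frozen=True)
class Policy:
    allowed_tools: frozenset[str]
    allowed_hosts: frozenset[str]

# Illustrative patterns only; real data-loss prevention is far more thorough.
SENSITIVE_PATTERNS = [
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),         # US SSN-shaped numbers
    re.compile(r"(?i)api[_-]?key\s*[:=]\s*\S+"),  # inline API keys
]

def authorize_tool(policy: Policy, tool: str) -> bool:
    # Policy engine: the agent may only invoke explicitly allowed tools.
    return tool in policy.allowed_tools

def route_outbound(policy: Policy, url: str, payload: str) -> str:
    # Network guardrail: refuse egress to hosts not on the allowlist.
    host = urlparse(url).hostname or ""
    if host not in policy.allowed_hosts:
        raise PermissionError(f"egress to {host!r} blocked by policy")
    # Privacy router: redact sensitive-looking data before it leaves.
    for pattern in SENSITIVE_PATTERNS:
        payload = pattern.sub("[REDACTED]", payload)
    return payload

policy = Policy(allowed_tools=frozenset({"search", "read_file"}),
                allowed_hosts=frozenset({"llm.internal.example"}))
print(authorize_tool(policy, "shell"))
print(route_outbound(policy, "https://llm.internal.example/v1/chat",
                     "customer 123-45-6789, api_key=sk-abc123"))
```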

Jensen's framing was that OpenClaw represents the same kind of inflection point for enterprise software that Linux, HTML, and Kubernetes each represented in prior computing eras. The argument is straightforward: just as every company eventually needed a Linux strategy, a web strategy, and a cloud-native strategy, every company now needs an agentic AI strategy.

Open Frontier Models for Every Industry

Alongside its hardware and infrastructure announcements, NVIDIA outlined its position as a contributor to open AI models. Rather than a single general-purpose model, NVIDIA is developing and releasing six domain-specific model families, each targeting a different field.

- Nemotron: Language models covering reasoning, visual understanding, retrieval-augmented generation, safety, and speech. Nemotron-3 Ultra is positioned as a base model for fine-tuning and sovereign AI deployments. Nemotron-4 is in active development

- Cosmos: World foundation models for physical AI, focused on generating and understanding synthetic environments for robotics and autonomous systems training

- Alpamayo: Described as the world's first reasoning-capable autonomous vehicle AI. Handles real-time decision making, narration of driving actions, and instruction following

- Groot: Foundation models for general-purpose humanoid and industrial robotics, covering whole-body control, manipulation, and policy generation

- BioNeMo: Open models for biology, chemistry, and molecular design, targeting drug discovery and life sciences research

- Earth2: Weather and climate forecasting models grounded in AI physics simulation

Each family is being actively maintained and updated. NVIDIA framed the ongoing investment in these models, rather than the models themselves, as the core value proposition for organizations building on them.

A thread running through this section of the keynote was sovereign AI, the ability for individual countries and organizations to build and run their own models rather than depending on a small number of large external providers. NVIDIA's open model initiative, combined with its on-premises infrastructure options, is positioned as the technical foundation for that capability.

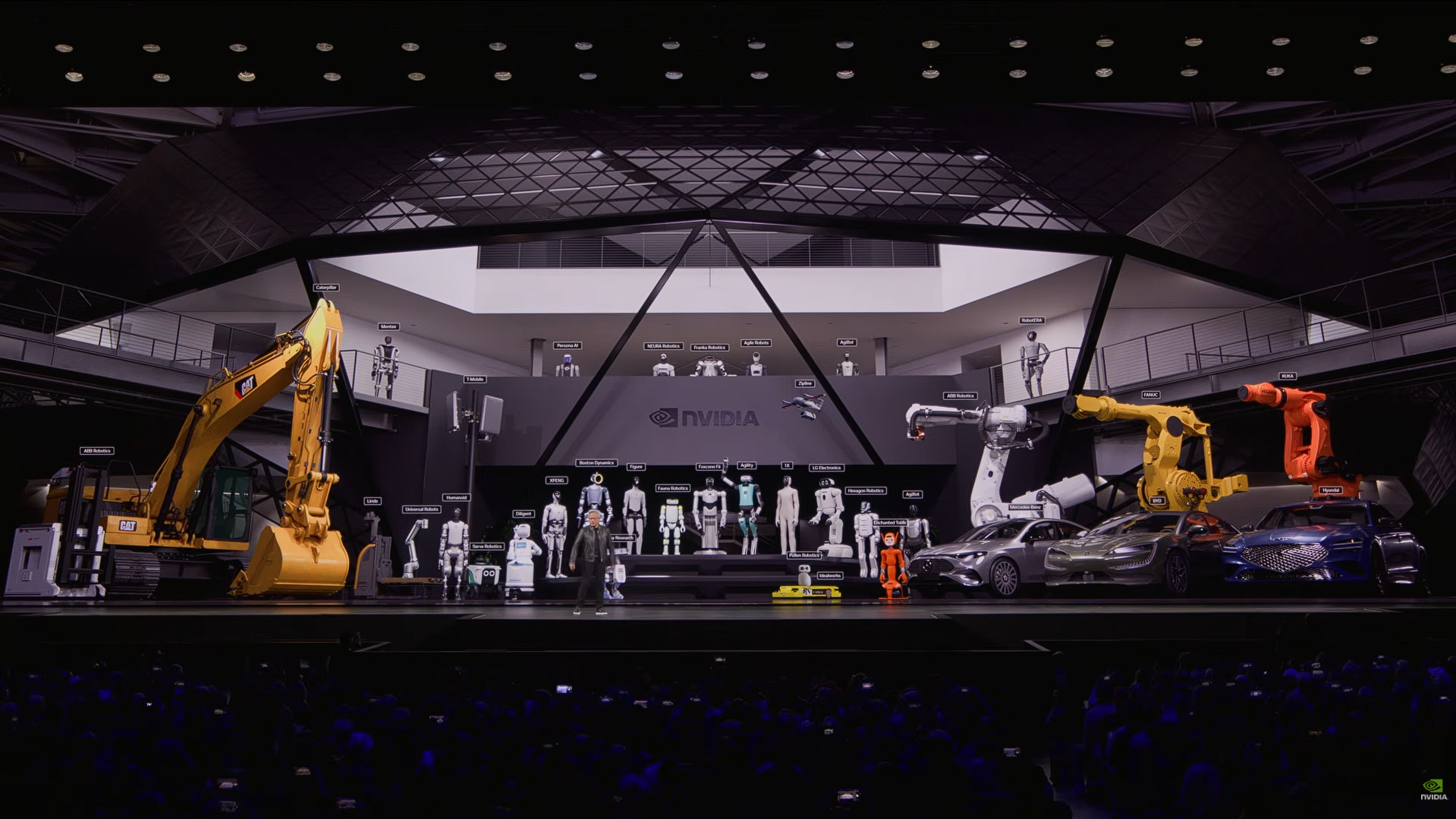

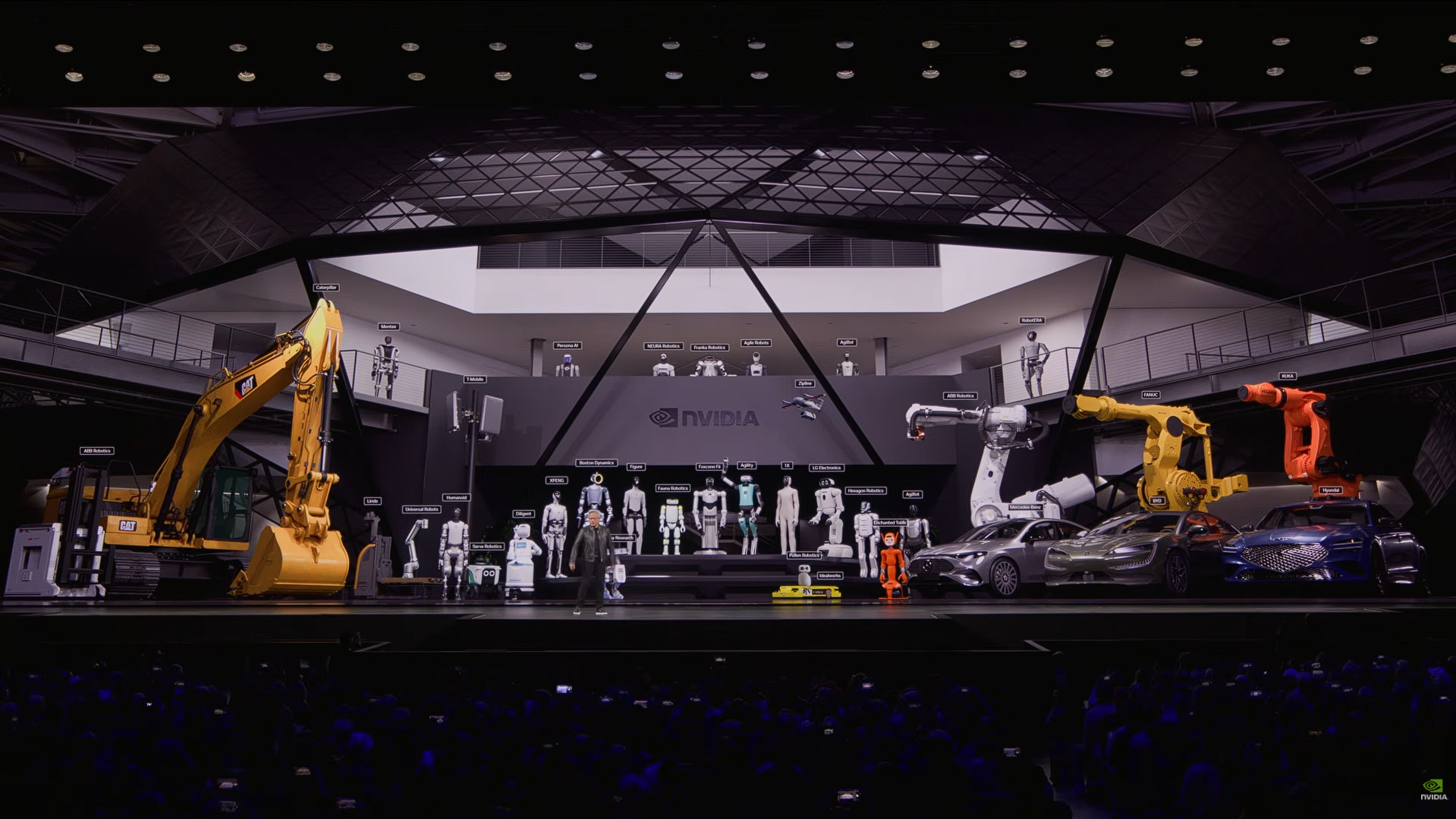

Robotics and Physical AI

Jensen dedicated a significant portion of the keynote to robotics, framing it as the next major frontier for AI after language and reasoning models. The core argument is that the same capabilities that enabled agentic AI in software (perception, reasoning, and action) now need to be extended to systems operating in the physical world.

NVIDIA provides three distinct computers for robotics development:

- Training computer: For developing and training robot AI models at scale via DGX Rubin

- Simulation computer: For synthetic data generation using Isaac Lab, Newton physics simulation, and Cosmos world models via MGX and RTX PRO.

- Edge computer: The onboard compute that runs inside the robot itself via Jetson and Thor.

The simulation layer is particularly important because real-world data collection alone is insufficient to train robots across the full range of edge cases they will encounter in deployment.

AI Infrastructure Planning for the Inference Era

GTC 2026 reinforced that the shift from training to inference as the dominant AI workload has real implications for how infrastructure should be evaluated. Tokens per watt and token speed at a fixed power budget are increasingly the metrics that determine what a deployment can deliver. That framing should inform hardware decisions being made today.

NVIDIA Blackwell is the right platform to deploy now. It is in full production, the software stack is mature, and the ecosystem is well established. Vera Rubin is sampling well, with broad availability ahead, and the Rubin Ultra to Feynman roadmap signals that NVIDIA's annual architecture cadence is not slowing down. Planning around that cadence, rather than waiting for it, is the more practical approach for most deployment timelines.

As an NVIDIA Elite Partner and solution integrator, Exxact Corporation works with organizations navigating these infrastructure decisions. From GPU servers and workstations to full rack-scale deployments, our team can help identify the right configuration for your workload and budget.

NVIDIA GTC 2026 Recap - Focusing on The Era of Tokens & Inference

NVIDIA GTC 2026 Recap Introduction

NVIDIA's GPU Technology Conference returned to San Jose this year with the energy of a company that knows it's at the center of one of the biggest technological shifts in history. Jensen Huang took the stage to cover everything from chip architecture and inference economics to open-source agentic frameworks and physical robotics — a lot of ground for one keynote.

At Exxact, we pay close attention to GTC every year because what NVIDIA announces directly shapes the AI infrastructure landscape our customers are building on. This recap breaks down the highlights and what they mean for organizations investing in AI today.

CUDA Turns 20. The Foundation Behind NVIDIA's Platform

CUDA, NVIDIA's parallel computing platform, turned 20 this year. Jensen used the anniversary as a framing device for the rest of the keynote, most of what NVIDIA has built, and continues to build, traces back to this foundation.

A few things worth noting about where CUDA stands today:

- Installed base: Hundreds of millions of CUDA-capable GPUs are deployed across every major cloud provider, OEM, and industry vertical.

- Backward compatibility: NVIDIA continues to optimize its software stack across older architectures, meaning deployed hardware gains performance improvements over time without replacement.

- Ecosystem scale: Thousands of libraries, frameworks, and tools are built on CUDA, with hundreds of thousands of public projects dependent on it.

- Library downloads are growing faster than ever, reflecting the expanding range of workloads CUDA now supports, from traditional HPC to AI training, inference, and data processing.

The core point Jensen made is that the value of NVIDIA's platform isn't just the hardware, it's the compounding effect of two decades of software, tooling, and developer adoption built on top of it. That installed base is why workloads running on older NVIDIA hardware, like Ampere, are still seeing rising cloud pricing despite being several generations old.

The Inference Inflection Point

For most of NVIDIA's history, training the model was the dominant and focus in AI workload. As of recently, that has shifted. Jensen made the case that inference is now the primary driver of compute demand and that the economics of AI businesses are increasingly defined by how efficiently they can generate tokens. The more tokens you can hold in memory, the faster you can process these tokens, and the faster you can generate tokens, the smarter the AI.

Three developments over the past two years drove this shift:

- Generative AI (2022–2023): Models moved from perception and classification to content generation, fundamentally changing how compute is consumed.

- Reasoning models (2024): Models like OpenAI's o1 and o3 introduced chain-of-thought processing — the model "thinks" before responding, which significantly increases both input and output token usage per query.

- Agentic AI (2024–2025): Tools like Claude Code enabled models to autonomously read files, write and test code, and iterate on tasks. This moved AI from answering questions to completing multi-step work, multiplying compute demand further.

Source: NVIDIA

The cumulative effect: Jensen estimated that compute demand per AI workload has increased roughly 10,000x over two years, while overall usage has grown approximately 100x. This implying total compute demand on the order of 1 million times greater than two years ago.

Jensen framed this shift with a concept worth understanding: the AI data center as a token factory.

- Every data center has a fixed power ceiling; once built, that constraint doesn't change.

- The relevant metric for an AI operator is therefore tokens per watt or how much useful AI output you can generate from your available power budget.

- A second axis matters equally: token speed (latency per inference), which determines what tiers of service you can offer and at what price points.

- Higher token speed enables larger models, longer context windows, and more reasoning, all of which command higher pricing.

This framing has direct implications for infrastructure decisions. The architecture you deploy determines your token throughput and token speed at a fixed power envelope which maps directly to revenue potential. Jensen's point was that this is how AI infrastructure ROI should be evaluated going forward, not just raw compute specs.

Vera Rubin: NVIDIA's Next-Generation AI Platform

Blackwell is currently in full production and shipping at scale, but NVIDIA used GTC to formally introduce Vera Rubin, the next-generation platform architected around the demands of agentic AI workloads. The first Vera Rubin rack is already operational at Microsoft Azure.

NVIDIA Vera Rubin NVL72

Vera Rubin is designed as a full-system platform, not just a new GPU. Key specs:

- 3.6 exaflops of compute per rack

- 260 TB/s all-to-all NVLink bandwidth

- 72 GPUs connected via sixth-generation NVLink

- Fully liquid-cooled, including NVLink switching infrastructure

- Hot water cooling at 45°C, reducing data center cooling overhead

NVIDIA Groq 3 LPX

One of the more significant announcements was NVIDIA's acquisition of the Groq chip team and integration of the Groq 3 LPX into the Vera Rubin platform. The two chips serve fundamentally different roles:

- Vera Rubin GPU: High memory capacity (288GB per chip), optimized for throughput-heavy workloads and KV cache storage

- Groq 3 LPX: Massive on-chip SRAM, statically compiled, deterministic data flow optimized for low-latency token generation (decode)

NVIDIA's inference orchestration software, Dynamo, splits the workload between them: Vera Rubin handles the prefill and attention portions of inference, while Groq handles the token generation (feedforward decode) stage. The result is 35x more throughput per megawatt compared to running Vera Rubin alone for latency-sensitive workloads.

NVIDIA Rubin Ultra

Beyond the standard Vera Rubin configuration, NVIDIA also announced Rubin Ultra, a higher-density variant using a new rack design called Kyber:

- Connects 144 GPUs in a single NVLink domain (vs. 72 in standard Vera Rubin)

- Compute nodes slide in vertically and connect via a midplane backplane rather than copper cabling

- Enables NVLink scaling beyond what copper interconnects can support

These two metric, throughput and token speed, map directly to the revenue potential of a given infrastructure deployment. NVIDIA presented generation-over-generation comparisons at a fixed 1 gigawatt power envelope:

- Blackwell delivered a substantial improvement over Hopper, and Vera Rubin is projected to deliver approximately 5x more revenue potential per gigawatt over Blackwell

- The architecture you deploy determines your output capacity at a fixed power budget, which sets a ceiling on what you can generate from that 1 gigawatt limit.

Accelerate AI Training an NVIDIA DGX

Training AI models on massive datasets can be accelerated exponentially with the right system. It's not just a high-performance computer, but a tool to propel and accelerate your research. Deploy multiple NVIDIA DGX nodes for increased scalability. DGX B200 and DGX B300 is available today!

Get a Quote Today

Vera Rubin (current/near-term)

Two configurations will be available:

- Standard Vera Rubin (Kyber rack): NVLink 144 with copper Scale-up

- Vera Rubin with Oberon: NVLink 72 plus optical Scale-up, expandable to NVLink 576 via co-packaged optics and Spectrum 6 switching

Both are in production. NVIDIA also noted the Vera CPU is already selling as a standalone product with high interest.

Rubin Ultra (next generation)

- New Kyber rack design supporting 144 GPUs in a single NVLink domain

- Introduces the Groq LP 35 chip, which will incorporate NVIDIA's MVRP compute structure for additional performance gains

- Co-packaged optics available for both Scale-up and Scale-out networking

Feynman (future generation)

- New GPU architecture paired with the Groq LP 40

- New CPU called Rosa (Rosalind), paired with BlueField 5 and ConnectX-10

- Both copper and co-packaged optical Scale-up supported

- Continues the annual architecture cadence NVIDIA has committed to

Key components shipping across generations

- Vera CPU: Designed for high single-threaded performance and data processing workloads, using LPDDR5 memory for efficiency. Targeted at agentic orchestration and tool-use workloads

- BlueField 4: New storage and networking DPU, with 100% of major storage vendors committed to the platform

- Spectrum X with co-packaged optics: World's first CPO Ethernet switch, in full production

- ConnectX 9: Updated networking paired with Vera CPU

NVIDIA's broader point on the roadmap is that the platform is designed for continuity. Each generation maintains backward compatibility, and the shift from copper to optical interconnects will happen incrementally across both Scale-up and Scale-out rather than as a hard cutover.

AI Factories and Industry Adoption

A recurring theme throughout the keynote was NVIDIA's expansion beyond chips and systems into the infrastructure layer that surrounds them. Two areas reflect this most clearly: the tooling NVIDIA has built for designing and operating AI factories, and the breadth of industries now building on NVIDIA platforms.

NVIDIA DSX: Digital Twin Platform for AI Factories

As AI factories grow in scale and complexity, NVIDIA introduced DSX, an Omniverse-based digital twin platform built for designing, commissioning, and operating large-scale AI infrastructure. The core idea is that the various technology vendors involved in building a data center, cooling, power, networking, compute, need a shared environment to co-design before anything is physically built.

DSX covers the full facility lifecycle:

- Design and simulation: Thermal, electrical, mechanical, and network simulation using tools from partners including Siemens, Cadence, and Dassault Systemes

- Virtual commissioning: Facilities can be validated digitally through Procore before construction begins, reducing delays

- Live operations: Once a facility is running, the digital twin becomes an operational tool. AI agents monitor cooling, electrical systems, and grid signals in real time, feeding into NVIDIA's Max-Q system which dynamically adjusts compute workloads to maximize token throughput within power constraints

The underlying argument is that at gigawatt scale, inefficiencies in how a facility is designed and operated translate directly to lost token output and lost revenue. NVIDIA estimates there is roughly a factor of two in recoverable efficiency across a typical AI factory deployment.

Industry Verticals

Jensen covered the range of industries now building on NVIDIA infrastructure, with each relying on domain-specific Cuda-X libraries rather than general-purpose compute alone:

- Financial services: The largest represented industry at GTC this year. Algorithmic trading is shifting from classical quantitative methods to deep learning models that discover patterns across large datasets without human feature engineering

- Healthcare: Drug discovery, diagnostic AI agents, and physical AI robotics for clinical settings. NVIDIA cited this as going through its "ChatGPT moment" in terms of adoption velocity

- Automotive: Self-driving and robotaxi platforms, covered in more detail in the robotics section below

- Telecom: NVIDIA's Aerial platform enables AI-RAN, converting traditional base stations into AI inference infrastructure at the edge. Active partnerships with Nokia and T-Mobile

- Industrial and manufacturing: Simulation-driven robot deployment for factory automation, with integration across major industrial automation vendors

- Media and entertainment: Real-time AI for broadcast, live translation, and gaming, built on NVIDIA's Holoscan platform

- Retail and CPG: Supply chain optimization and AI agents for customer support across a $35 trillion industry

- Quantum computing: 35 companies at GTC building hybrid GPU-quantum systems using NVIDIA's cuQuantum platform

The breadth here reflects a consistent pattern in how NVIDIA approaches verticals. Rather than positioning GPUs as general-purpose accelerators, each domain gets purpose-built libraries, Cuda-X, that solve specific algorithmic problems in that field. Jensen described these libraries as the core of what differentiates NVIDIA's platform long term.

OpenClaw and the Agentic AI Framework

One of the more significant announcements at GTC was NVIDIA's support for OpenClaw, an open-source agentic AI framework that has seen unusually rapid adoption since its release. Jensen described it as the fastest adopted open-source project in history, surpassing Linux's 30-year install base within weeks.

What is OpenClaw?

OpenClaw functions as an operating system for AI agents. The components map closely to what a traditional OS provides:

- Resource management across file systems, tools, and language models

- Task scheduling and cron job execution

- Problem decomposition: breaking a prompt into sequential steps

- Sub-agent spawning for parallel workstreams

- Multimodal I/O including text, voice, and messaging

The practical result is that anyone can spin up a functioning AI agent by running a single command. The agent connects to a language model, receives a task, and executes it autonomously across tools and systems.

The same capability that makes OpenClaw useful also creates a security risk in a corporate environment. An agentic system with access to internal infrastructure can read sensitive data, execute code, and communicate externally. NVIDIA worked with OpenClaw's author to address this with an enterprise-ready reference design called NeMo Claw, which adds:

- A policy engine for governing agent behavior

- Network guardrails

- A privacy router to prevent unauthorized data exfiltration

- Integration hooks for existing enterprise SaaS policy systems

NeMo Claw is available to download and deploy and is designed to connect with the policy engines that enterprise software vendors already maintain.

Jensen's framing was that OpenClaw represents the same kind of inflection point for enterprise software that Linux, HTML, and Kubernetes each represented in prior computing eras. The argument is straightforward: just as every company eventually needed a Linux strategy, a web strategy, and a cloud-native strategy, every company now needs an agentic AI strategy.

Open Frontier Models for Every Industry

Alongside its hardware and infrastructure announcements, NVIDIA outlined its position as a contributor to open AI models. Rather than a single general-purpose model, NVIDIA is developing and releasing six domain-specific model families, each targeting a different field.

- Nemotron: Language models covering reasoning, visual understanding, retrieval-augmented generation, safety, and speech. Nemotron-3 Ultra is positioned as a base model for fine-tuning and sovereign AI deployments. Nemotron-4 is in active development

- Cosmos: World foundation models for physical AI, focused on generating and understanding synthetic environments for robotics and autonomous systems training

- Alpamayo: Described as the world's first reasoning-capable autonomous vehicle AI. Handles real-time decision making, narration of driving actions, and instruction following

- GR00T: Foundation models for general-purpose humanoid and industrial robotics, covering whole-body control, manipulation, and policy generation

- BioNeMo: Open models for biology, chemistry, and molecular design, targeting drug discovery and life sciences research

- Earth-2: Weather and climate forecasting models grounded in AI physics simulation

Each family is being actively maintained and updated. NVIDIA framed the ongoing investment in these models, rather than the models themselves, as the core value proposition for organizations building on them.

A thread running through this section of the keynote was sovereign AI: the ability of individual countries and organizations to build and run their own models rather than depending on a small number of large external providers. NVIDIA's open model initiative, combined with its on-premises infrastructure options, is positioned as the technical foundation for that capability.

Robotics and Physical AI

Jensen dedicated a significant portion of the keynote to robotics, framing it as the next major frontier for AI after language and reasoning models. The core argument is that the same capabilities that enabled agentic AI in software (perception, reasoning, and action) now need to be extended to systems operating in the physical world.

NVIDIA provides three distinct computers for robotics development:

- Training computer: For developing and training robot AI models at scale via DGX Rubin

- Simulation computer: For synthetic data generation using Isaac Lab, Newton physics simulation, and Cosmos world models via MGX and RTX PRO

- Edge computer: The onboard compute that runs inside the robot itself via Jetson and Thor

The simulation layer is particularly important because real-world data collection alone is insufficient to train robots across the full range of edge cases they will encounter in deployment.

AI Infrastructure Planning for the Inference Era

GTC 2026 reinforced that the shift from training to inference as the dominant AI workload has real implications for how infrastructure should be evaluated. Tokens per watt and token speed at a fixed power budget are increasingly the metrics that determine what a deployment can deliver. That framing should inform hardware decisions being made today.
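To make the power-budget framing concrete, here is a minimal Python sketch that compares two hypothetical systems by tokens per watt (tokens per second divided by watts, which works out to tokens per joule) and by total throughput when a fixed facility power budget is filled with whole units. The `Deployment` class, the function names, and every figure are illustrative assumptions, not NVIDIA benchmarks.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    """A hypothetical inference system with illustrative, made-up figures."""
    name: str
    tokens_per_second: float  # aggregate output tokens/s across all users
    power_watts: float        # system power draw at that throughput

    def tokens_per_joule(self) -> float:
        # tokens/s divided by W (i.e. J/s) gives tokens per joule,
        # commonly quoted as "tokens per watt"
        return self.tokens_per_second / self.power_watts


def throughput_at_power_budget(d: Deployment, budget_watts: float) -> float:
    """Aggregate tokens/s if the power budget is filled with whole copies of d.

    Assumes throughput scales linearly with unit count and ignores cooling
    and networking overhead, which a real evaluation would include.
    """
    units = int(budget_watts // d.power_watts)  # only whole systems fit
    return units * d.tokens_per_second


if __name__ == "__main__":
    budget = 1_000_000  # a hypothetical 1 MW facility
    a = Deployment("System A", tokens_per_second=10_000, power_watts=10_000)
    b = Deployment("System B", tokens_per_second=30_000, power_watts=15_000)
    for d in (a, b):
        print(d.name, d.tokens_per_joule(), throughput_at_power_budget(d, budget))
```

With these assumed numbers, System B draws more power per unit but doubles the tokens per joule, so the same 1 MW budget yields roughly twice the aggregate throughput. The sketch deliberately covers only aggregate throughput; per-user token speed is a separate axis that a real comparison would weigh alongside it.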

NVIDIA Blackwell is the right platform to deploy now. It is in full production, the software stack is mature, and the ecosystem is well established. Vera Rubin is sampling well, with broad availability still ahead, and the roadmap from Rubin Ultra to Feynman signals that NVIDIA's annual architecture cadence is not slowing down. Planning around that cadence, rather than waiting for it, is the more practical approach for most deployment timelines.

As an NVIDIA Elite Partner and solution integrator, Exxact Corporation works with organizations navigating these infrastructure decisions. From GPU servers and workstations to full rack-scale deployments, our team can help identify the right configuration for your workload and budget.