Introduction

Agentic AI pipelines are computational architectures where multiple specialized AI agents collaborate to complete complex tasks. Each agent in the pipeline handles a specific function, such as retrieving data, analyzing it, making decisions, or executing actions in coordination to complete the larger goal.

In a nutshell, on-premises deployment advantages:

- Performance: Agents operate close to enterprise data and compute resources

- Coordination: Lightweight APIs or message buses enable agent communication

- Speed: Faster decision-making through reduced latency

- Control: Stronger governance over proprietary data

- Cost predictability: Stable operating costs on owned infrastructure

- Independence: No dependencies on external cloud vendors

This article explores the advantages of on-premises GPU deployment, provides a high-level architectural view, explains orchestration and inter-agent communication, examines performance optimization patterns, evaluates economic considerations, and presents a practical starting strategy.

Understanding Agentic AI Pipelines

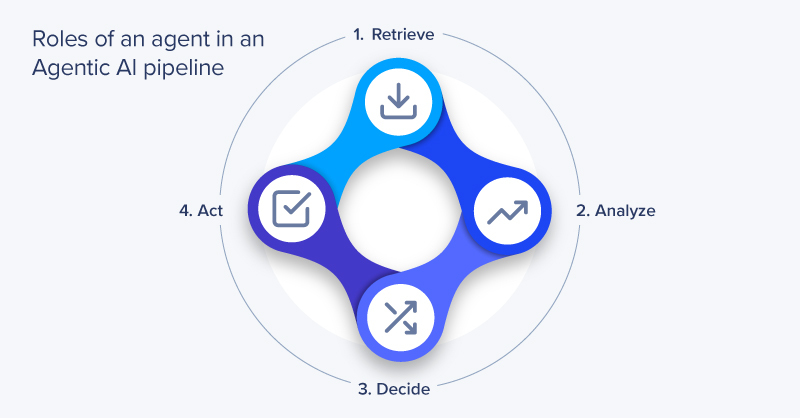

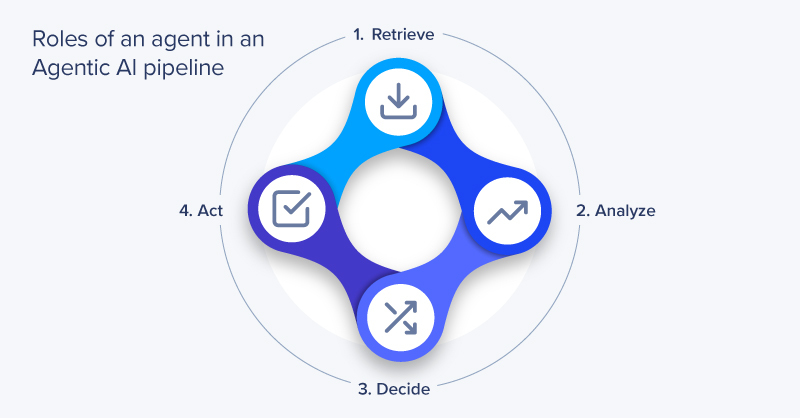

Agents within an agentic AI pipeline operate as distinct, specialized services, each designed to execute a specific step in a larger workflow.

- Retrieve: These agents access and extract relevant information from various sources, such as databases, data streams, or document repositories.

- Analyze: Once data is retrieved, analysis agents process and interpret it, identifying patterns, anomalies, or insights relevant to the task.

- Decide: Decision agents apply reasoning models, business logic, or learned policies to the analyzed data to determine the optimal course of action.

- DecideAct: Action agents execute the final step, which could involve triggering a business process, updating a system of record, sending a notification, or generating a report.

A multi-agent architecture consistently outperforms a single, monolithic AI model for complex enterprise workflows since models in each stage specialize in their task. It also enables parallel execution of tasks and allows for targeted scaling of individual components.

- Speed: Concurrent task execution across multiple agents significantly reduces the total time required to complete a complex process.

- Resilience: The failure of a single agent does not necessarily bring down the entire pipeline. The system can be designed to route around the failure or retry the specific step, increasing overall robustness.

- Auditability: Each agent's actions and decisions can be logged independently, creating clear and detailed traces for compliance, governance, and debugging purposes. AI systems are still black boxes, but errors can be attributed to specific models.

Agentic AI On-Prem Hardware: Why GPU Clusters Win

Deploying agentic AI pipelines on on-premises GPU clusters offers distinct advantages:

- Data Sovereignty: All processing occurs inside the enterprise perimeter, ensuring compliance with privacy, residency, and governance requirements.

- Cost Predictability: Fixed infrastructure costs become more cost-effective at steady utilization than fluctuating cloud GPU rates.

- Consistent Latency: Proximity to both data sources and end users reduces network delays, enabling real-time agent responsiveness.

- Custom Optimization: Enterprises can tune hardware, networking configurations, and policies to match workload profiles.

An on-premises, GPU-powered agentic AI system is typically structured into distinct layers, each with a specific function that contributes to the pipeline's overall operation.

| Layer | Function |

| GPU Servers | • Physical infrastructure consisting of servers equipped with high-performance GPUs that provide the necessary compute acceleration for agentic workloads. |

| Network Fabric | • High-throughput, low-latency east-west networking connects agents to each other, to storage, and to retrieval systems.

• Predictable tail latency matters for multi-hop workflows. |

| Data Plane (Storage + Shared State) | • Retrieval and action steps depend on a storage platform that houses model artifacts, vector embedding search, metadata, and more.

• Often the true bottleneck for throughput and responsiveness. |

| Orchestration Layer | • Responsible for scheduling agent workloads, allocating GPU resources, enforcing priorities, and managing the lifecycle of agent containers.

• Kubernetes, SLURM, and others. |

| Agent Layer | • Contains the individual, containerized AI agents.

• Each agent performs its specialized task—retrieval, analysis, decision, or action—as part of the broader workflow. |

| Operations + Governance | • Platform controls that make enterprise deployments operable and safe

• identity/access control, secrets management, audit logs, monitoring, and distributed tracing • debug latency and failures across hops. |

| Interfaces | • Provides APIs, dashboards, or integration points

• trigger workflows and interact with the agentic pipeline. |

This architecture is governed by a set of key principles designed to ensure scalability, security, and manageability:

- Containerization: Each agent is packaged as a container (e.g., Docker), ensuring portability, resource isolation, and consistent deployment across the cluster.

- Shared Policy Enforcement: Security and compliance policies are defined centrally and enforced by the orchestration layer, ensuring that all agents adhere to governance standards.

- Centralized Monitoring: A unified monitoring and logging system tracks the health, performance, and resource consumption of the entire cluster, providing visibility for operations teams.

- Co-location of Models and Datasets: Machine learning models and the datasets they require are stored close to the GPU servers to minimize data transfer overhead and reduce latency.

Fueling Innovation with an Exxact Multi-GPU Server

Training AI models on massive datasets can be accelerated exponentially with the right system. It's not just a high-performance computer, but a tool to propel and accelerate your research.

Configure NowWays to Scale On-Prem AI Performance

Here are several methods that are proven to significantly improve the throughput, latency, and efficiency of agentic pipelines in on-premises deployments.

- Co-Locate Agents with Data and Models: Placing compute close to storage reduces network hops and latency. For example, agents deployed on the same node as their feature store or model weights can avoid repeated transfers, improving throughput and lowering costs. This is especially important when working with large graph datasets or embeddings.

- Pre-Warm and Pin Hot Models: Frequently used models should be loaded into GPU memory ahead of time. Pre-warming avoids cold-start delays, and pinning ensures that critical models stay resident in memory rather than being offloaded when resources are contested. This allows agents to respond instantly to common queries.

- Batch Requests: Instead of processing one request per GPU call, agents can combine multiple small inference jobs into a single batch. Batching maximizes GPU utilization and reduces scheduling overhead, while still meeting latency targets if batch sizes are carefully tuned.

- Split GPU Resources: Not all agents need a full GPU. With GPU partitioning (e.g., CUDA MPS or MIG on NVIDIA GPUs), lightweight inference tasks can share a GPU slice, while heavier deep learning jobs run on dedicated GPUs. This prevents resource underutilization and improves overall efficiency.

- Asynchronous Messaging: To avoid idle GPU cycles, agents should communicate asynchronously. Rather than blocking on a single response, they can continue queuing or processing other tasks, keeping GPUs fully utilized even in multi-agent workflows.

Economics and ROI of Building Agentic AI Pipelines

The economic advantages of the on-prem model become clear when analyzing factors beyond the raw cost of compute. While cloud computing can be an easy barrier to entry with low startup costs and bursty workloads, ongoing agentic AI costs can snowball very fast. On-prem hardware should be prioritized at high utilization, with additional hybrid cloud for temporary spikes in computing demand.

| Metric | On-Prem GPU Deployment | Cloud On-Demand GPUs |

| Cost per GPU-Hour | • Becomes very low after the initial capital expenditure is amortized over the hardware's lifespan. | • Remains a variable and recurring operational expense, billed hourly. |

| Egress Fees | • None. Data movement between internal systems does not incur additional charges. | • Applicable and often significant for any data transferred out of the cloud provider's network. |

| Data Duplication Costs | • Minimal. Data can be kept in a centralized, high-performance storage system accessible by all agents. | • Can be higher, as data often needs to be replicated across multiple regions or services for performance or availability. |

| Latency Costs | • Negligible. Ultra-low latency enables real-time applications that may not be feasible over the public internet. | • Potentially higher due to network hops and variability, which can impact application performance and user experience. |

| Electrical Usage | • Known facility power and cooling costs can be modeled up front as part of TCO, then optimized over time through utilization improvements. | • Indirect and bundled into hourly rates, but still paid for. • Costs are less visible, and optimization levers are limited. |

Conclusion

On-premises GPU clusters offer enterprises a powerful foundation for deploying agentic AI pipelines with security, performance, and cost predictability. By maintaining control over infrastructure, data, and workload optimization, organizations can build systems tailored to their specific requirements while avoiding the unpredictable costs and latency challenges of cloud-only approaches.

The architectural patterns, communication strategies, and economic considerations outlined in this guide provide a roadmap for designing scalable, efficient agentic AI deployments. While cloud resources remain valuable for handling overflow capacity and experimentation, the core of a production agentic AI system benefits from the stability and control that on-premises infrastructure provides.

Ready to scale up your computing infrastructure for agentic AI? Exxact offers custom configurable solutions from individual compute nodes to full rack scale designs. Talk to an engineer today!

Fueling Innovation with an Exxact Designed Computing Cluster

Deploying full-scale AI models can be accelerated exponentially with the right computing infrastructure. Storage, head node, networking, compute - all components of your next Exxact cluster are configurable to your workload to drive and accelerate research and innovation.

Get a Quote Today

How Agentic AI Platforms Organize Their Hardware Infrastructure

Introduction

Agentic AI pipelines are computational architectures in which multiple specialized AI agents collaborate to complete complex tasks. Each agent in the pipeline handles a specific function, such as retrieving data, analyzing it, making decisions, or executing actions, and the agents coordinate to achieve the larger goal.

In a nutshell, on-premises deployment offers the following advantages:

- Performance: Agents operate close to enterprise data and compute resources

- Coordination: Lightweight APIs or message buses enable agent communication

- Speed: Faster decision-making through reduced latency

- Control: Stronger governance over proprietary data

- Cost predictability: Stable operating costs on owned infrastructure

- Independence: No dependencies on external cloud vendors

This article explores the advantages of on-premises GPU deployment, provides a high-level architectural view, explains orchestration and inter-agent communication, examines performance optimization patterns, evaluates economic considerations, and presents a practical starting strategy.

Understanding Agentic AI Pipelines

Agents within an agentic AI pipeline operate as distinct, specialized services, each designed to execute a specific step in a larger workflow.

- Retrieve: These agents access and extract relevant information from various sources, such as databases, data streams, or document repositories.

- Analyze: Once data is retrieved, analysis agents process and interpret it, identifying patterns, anomalies, or insights relevant to the task.

- Decide: Decision agents apply reasoning models, business logic, or learned policies to the analyzed data to determine the optimal course of action.

- Act: Action agents execute the final step, which could involve triggering a business process, updating a system of record, sending a notification, or generating a report.
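The four roles above can be sketched as plain functions chained in order. This is a minimal illustration rather than a framework: the `Context` dataclass, the in-memory `DOCS` store, the stage function names, and the threshold rule are all hypothetical stand-ins for real retrieval systems, models, and business logic.

```python
from dataclasses import dataclass, field

# Hypothetical in-memory "document repository" standing in for a real data source.
DOCS = {
    "inv-1": {"amount": 120.0},
    "inv-2": {"amount": 9800.0},
}


@dataclass
class Context:
    """State handed from one agent to the next."""
    doc_id: str
    record: dict = field(default_factory=dict)
    anomaly: bool = False
    decision: str = ""
    actions: list = field(default_factory=list)


def retrieve(ctx: Context) -> Context:
    # Retrieve: look up the record for this request.
    ctx.record = DOCS[ctx.doc_id]
    return ctx


def analyze(ctx: Context, threshold: float = 5000.0) -> Context:
    # Analyze: placeholder for a model; flags unusually large amounts.
    ctx.anomaly = ctx.record["amount"] > threshold
    return ctx


def decide(ctx: Context) -> Context:
    # Decide: simple business rule applied to the analysis result.
    ctx.decision = "escalate" if ctx.anomaly else "approve"
    return ctx


def act(ctx: Context) -> Context:
    # Act: placeholder for a side effect such as a notification or record update.
    ctx.actions.append(f"{ctx.decision}:{ctx.doc_id}")
    return ctx


PIPELINE = [retrieve, analyze, decide, act]


def run_pipeline(doc_id: str) -> Context:
    ctx = Context(doc_id=doc_id)
    for stage in PIPELINE:
        ctx = stage(ctx)
    return ctx


if __name__ == "__main__":
    print(run_pipeline("inv-2").actions)
```

In a real deployment each stage would typically be its own service, but keeping the same narrow input/output contract between stages is what makes them independently replaceable and scalable.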

A multi-agent architecture often outperforms a single, monolithic AI model on complex enterprise workflows because each stage uses a model specialized for its task. It also enables parallel execution of tasks and targeted scaling of individual components. The main benefits are:

- Speed: Concurrent task execution across multiple agents significantly reduces the total time required to complete a complex process.

- Resilience: The failure of a single agent does not necessarily bring down the entire pipeline. The system can be designed to route around the failure or retry the specific step, increasing overall robustness.

- Auditability: Each agent's actions and decisions can be logged independently, creating clear and detailed traces for compliance, governance, and debugging purposes. Individual models may remain black boxes, but errors can at least be attributed to a specific agent.
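As a rough sketch of these three properties together, the example below runs several simulated agents concurrently, lets one of them fail without cancelling the others, and writes one audit record per agent. The agent names, the `AUDIT_LOG` list, and the `delay` and `fail` parameters are hypothetical; `asyncio.sleep` stands in for real inference or I/O.

```python
import asyncio
import time

AUDIT_LOG: list = []  # hypothetical in-memory audit sink


async def run_agent(name: str, delay: float, fail: bool = False) -> str:
    """Simulate one agent; `delay` stands in for inference or I/O time."""
    started = time.perf_counter()
    status = "ok"
    try:
        await asyncio.sleep(delay)
        if fail:
            raise RuntimeError(f"{name} failed")
        return f"{name}-result"
    except Exception:
        status = "error"
        raise
    finally:
        # One audit record per agent, written whether the call succeeded or not.
        AUDIT_LOG.append({
            "agent": name,
            "status": status,
            "seconds": round(time.perf_counter() - started, 3),
        })


async def fan_out() -> list:
    # return_exceptions=True keeps one failure from cancelling the other agents;
    # the failed slot holds the exception object instead of a result.
    return await asyncio.gather(
        run_agent("retrieve-crm", 0.05),
        run_agent("retrieve-docs", 0.05, fail=True),
        run_agent("retrieve-metrics", 0.05),
        return_exceptions=True,
    )


def main() -> list:
    AUDIT_LOG.clear()
    return asyncio.run(fan_out())


if __name__ == "__main__":
    print(main())
    print(AUDIT_LOG)
```

Because the three agents wait concurrently, the total wall-clock time is close to the slowest agent rather than the sum of all three, and the caller can decide per slot whether to retry or route around the failure.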

Agentic AI On-Prem Hardware: Why GPU Clusters Win

Deploying agentic AI pipelines on on-premises GPU clusters offers distinct advantages:

- Data Sovereignty: All processing occurs inside the enterprise perimeter, ensuring compliance with privacy, residency, and governance requirements.

- Cost Predictability: Fixed infrastructure costs become more cost-effective at steady utilization than fluctuating cloud GPU rates.

- Consistent Latency: Proximity to both data sources and end users reduces network delays, enabling real-time agent responsiveness.

- Custom Optimization: Enterprises can tune hardware, networking configurations, and policies to match workload profiles.

An on-premises, GPU-powered agentic AI system is typically structured into distinct layers, each with a specific function that contributes to the pipeline's overall operation.

| Layer | Function |
| --- | --- |
| GPU Servers | • Physical infrastructure: servers equipped with high-performance GPUs that provide the compute acceleration for agentic workloads. |
| Network Fabric | • High-throughput, low-latency east-west networking connects agents to each other, to storage, and to retrieval systems. • Predictable tail latency matters for multi-hop workflows. |
| Data Plane (Storage + Shared State) | • Retrieval and action steps depend on a storage platform that houses model artifacts, vector embedding search indexes, metadata, and more. • Often the true bottleneck for throughput and responsiveness. |
| Orchestration Layer | • Schedules agent workloads, allocates GPU resources, enforces priorities, and manages the lifecycle of agent containers. • Typical tools: Kubernetes, SLURM, and others. |
| Agent Layer | • Contains the individual, containerized AI agents. • Each agent performs its specialized task (retrieval, analysis, decision, or action) as part of the broader workflow. |
| Operations + Governance | • Platform controls that make enterprise deployments operable and safe. • Identity/access control, secrets management, audit logs, and monitoring. • Distributed tracing to debug latency and failures across hops. |
| Interfaces | • Provides APIs, dashboards, or integration points used to trigger workflows and interact with the agentic pipeline. |

This architecture is governed by a set of key principles designed to ensure scalability, security, and manageability:

- Containerization: Each agent is packaged as a container (e.g., Docker), ensuring portability, resource isolation, and consistent deployment across the cluster.

- Shared Policy Enforcement: Security and compliance policies are defined centrally and enforced by the orchestration layer, ensuring that all agents adhere to governance standards.

- Centralized Monitoring: A unified monitoring and logging system tracks the health, performance, and resource consumption of the entire cluster, providing visibility for operations teams.

- Co-location of Models and Datasets: Machine learning models and the datasets they require are stored close to the GPU servers to minimize data transfer overhead and reduce latency.

Inter-Agent Communication and Shared Context

For agentic pipelines, coordination overhead (network hops, serialization, and retries) can become a bigger bottleneck than raw GPU throughput. The communication and context layer should be designed for low latency, horizontal scale, and debuggability.

- Choose the right communication model:

  - Request/response (REST, gRPC) works well when an agent needs an immediate answer to proceed.

  - Async/event messaging (Kafka, message bus) is ideal when steps can be decoupled and queued, improving throughput and resilience during spikes. Kafka is a common fit for event-driven workflows and replayable pipelines.

- Minimize shared context “blast radius” to reduce bandwidth, keep memory pressure down, and help keep clusters efficient at scale. Avoid passing large payloads between agents. Prefer:

  - Share pointers, not datasets: IDs, URIs, hashes, or embeddings instead of raw documents.

  - Fetch on demand: downstream agents retrieve the full data only when required.

- Build reliability via timeouts, bounded retries with backoff, and circuit breakers. These prevent one failing agent from stalling the whole pipeline.

- Build visibility via distributed tracing (trace IDs across hops), structured logs, and latency and error metrics. These make it possible to pinpoint whether bottlenecks are in the network, the orchestration layer, or GPU inference.
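The reliability measures above can be sketched in a few lines. This is an illustrative outline under stated assumptions, not a production library: `CircuitBreaker`, `CircuitOpenError`, and `call_with_retries` are hypothetical names, and the wrapped callable `fn` is assumed to enforce its own timeout (for example through its HTTP or gRPC client settings) and to raise an exception when that timeout expires.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when calls are rejected because the breaker is open."""


class CircuitBreaker:
    def __init__(self, max_failures: int = 3, reset_after: float = 30.0):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.failures = 0
        self.opened_at = None  # monotonic timestamp when the breaker opened

    def allow(self) -> bool:
        if self.opened_at is None:
            return True
        # After the cool-down, let trial calls through again (half-open).
        return time.monotonic() - self.opened_at >= self.reset_after

    def record(self, success: bool) -> None:
        if success:
            self.failures = 0
            self.opened_at = None
        else:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = time.monotonic()


def call_with_retries(fn, breaker, attempts=3, base_delay=0.1, sleep=time.sleep):
    """Call fn() with bounded retries and exponential backoff.

    Assumes fn() raises on failure, including when its own timeout expires.
    """
    last_error = None
    for attempt in range(attempts):
        if not breaker.allow():
            raise CircuitOpenError("downstream agent marked unhealthy")
        try:
            result = fn()
        except Exception as exc:  # in practice, catch only retryable errors
            breaker.record(False)
            last_error = exc
            if attempt < attempts - 1:
                sleep(base_delay * 2 ** attempt)  # 0.1s, 0.2s, 0.4s, ...
        else:
            breaker.record(True)
            return result
    raise last_error
```

Sharing one breaker per downstream agent means that once the agent has failed `max_failures` times in a row, callers fail fast instead of queuing behind more timeouts, which is what keeps one unhealthy hop from stalling the whole pipeline.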

Ways to Scale On-Prem AI Performance

Several methods can significantly improve the throughput, latency, and efficiency of agentic pipelines in on-premises deployments:

- Co-Locate Agents with Data and Models: Placing compute close to storage reduces network hops and latency. For example, agents deployed on the same node as their feature store or model weights can avoid repeated transfers, improving throughput and lowering costs. This is especially important when working with large graph datasets or embeddings.

- Pre-Warm and Pin Hot Models: Frequently used models should be loaded into GPU memory ahead of time. Pre-warming avoids cold-start delays, and pinning ensures that critical models stay resident in memory rather than being evicted when resources are contested. This allows agents to answer common queries without waiting for a model load.

- Batch Requests: Instead of processing one request per GPU call, agents can combine multiple small inference jobs into a single batch. Batching maximizes GPU utilization and reduces scheduling overhead, while still meeting latency targets if batch sizes are carefully tuned.

- Split GPU Resources: Not all agents need a full GPU. With GPU sharing or partitioning (e.g., CUDA MPS or MIG on NVIDIA GPUs), lightweight inference tasks can share a GPU or run on a GPU slice, while heavier deep learning jobs run on dedicated GPUs. This prevents resource underutilization and improves overall efficiency.

- Asynchronous Messaging: To avoid idle GPU cycles, agents should communicate asynchronously. Rather than blocking on a single response, they can continue queuing or processing other tasks, keeping GPUs fully utilized even in multi-agent workflows.
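The batching and asynchronous messaging ideas above can be combined in a small micro-batcher. The sketch below is a simplified illustration: `MicroBatcher`, `fake_infer`, and the `max_batch` and `max_wait` values are hypothetical, and `infer_batch` is a stand-in for a single batched GPU call. A real blocking GPU call would normally be moved off the event loop (for example with `loop.run_in_executor`).

```python
import asyncio


class MicroBatcher:
    """Collect requests until the batch is full or max_wait seconds elapse."""

    def __init__(self, infer_batch, max_batch: int = 8, max_wait: float = 0.01):
        self.infer_batch = infer_batch  # callable: list of inputs -> list of outputs
        self.max_batch = max_batch
        self.max_wait = max_wait
        self.queue: asyncio.Queue = asyncio.Queue()

    async def submit(self, item):
        """Called by agents; returns once the item's batch has been processed."""
        future = asyncio.get_running_loop().create_future()
        await self.queue.put((item, future))
        return await future

    async def run(self):
        loop = asyncio.get_running_loop()
        while True:
            batch = [await self.queue.get()]  # block until the first request
            deadline = loop.time() + self.max_wait
            while len(batch) < self.max_batch:
                timeout = deadline - loop.time()
                if timeout <= 0:
                    break
                try:
                    batch.append(await asyncio.wait_for(self.queue.get(), timeout))
                except asyncio.TimeoutError:
                    break
            outputs = self.infer_batch([item for item, _ in batch])
            for (_, future), output in zip(batch, outputs):
                if not future.done():  # skip callers that were cancelled
                    future.set_result(output)


async def demo():
    batch_sizes = []

    def fake_infer(inputs):  # stands in for one batched GPU call
        batch_sizes.append(len(inputs))
        return [x * 2 for x in inputs]

    # Create the batcher inside the running loop so its queue binds to that loop.
    batcher = MicroBatcher(fake_infer, max_batch=4, max_wait=0.05)
    worker = asyncio.create_task(batcher.run())
    results = await asyncio.gather(*(batcher.submit(i) for i in range(6)))
    worker.cancel()
    return results, batch_sizes


if __name__ == "__main__":
    print(asyncio.run(demo()))
```

`max_wait` is the latency budget traded for throughput: a larger value yields fuller batches but adds up to that much delay to the first request in each batch, so it should be tuned against the pipeline's latency target.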

Economics and ROI of Building Agentic AI Pipelines

The economic advantages of the on-prem model become clear when analyzing factors beyond the raw cost of compute. Cloud computing offers a low barrier to entry, with low startup costs and a good fit for bursty workloads, but ongoing agentic AI costs can snowball quickly. Prioritize on-prem hardware for workloads with sustained high utilization, and add hybrid cloud capacity for temporary spikes in computing demand.

| Metric | On-Prem GPU Deployment | Cloud On-Demand GPUs |
| --- | --- | --- |
| Cost per GPU-Hour | • Becomes very low after the initial capital expenditure is amortized over the hardware's lifespan. | • Remains a variable and recurring operational expense, billed hourly. |
| Egress Fees | • None. Data movement between internal systems does not incur additional charges. | • Applicable and often significant for any data transferred out of the cloud provider's network. |
| Data Duplication Costs | • Minimal. Data can be kept in a centralized, high-performance storage system accessible by all agents. | • Can be higher, as data often needs to be replicated across multiple regions or services for performance or availability. |
| Latency Costs | • Negligible. Ultra-low latency enables real-time applications that may not be feasible over the public internet. | • Potentially higher due to network hops and variability, which can impact application performance and user experience. |
| Electrical Usage | • Known facility power and cooling costs can be modeled up front as part of TCO, then optimized over time through utilization improvements. | • Indirect and bundled into hourly rates, but still paid for. • Costs are less visible, and optimization levers are limited. |
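The cost-per-GPU-hour comparison can be made concrete with a simple amortization model: total cost of ownership (capital expenditure plus operating costs over the hardware's lifespan) divided by the GPU-hours actually used. The sketch below is a back-of-the-envelope illustration only. Every figure in the example (purchase price, lifespan, operating cost, cloud rate) is a hypothetical placeholder, not a quote or benchmark, and the model ignores egress, staffing, and financing costs.

```python
HOURS_PER_YEAR = 24 * 365


def on_prem_cost_per_gpu_hour(capex, lifespan_years, annual_opex, gpus, utilization):
    """Amortized cost of one *utilized* GPU-hour on owned hardware."""
    total_cost = capex + annual_opex * lifespan_years
    used_gpu_hours = gpus * HOURS_PER_YEAR * lifespan_years * utilization
    return total_cost / used_gpu_hours


def break_even_utilization(capex, lifespan_years, annual_opex, gpus, cloud_rate):
    """Utilization at which on-prem cost per GPU-hour equals the cloud rate."""
    total_cost = capex + annual_opex * lifespan_years
    return total_cost / (gpus * HOURS_PER_YEAR * lifespan_years * cloud_rate)


if __name__ == "__main__":
    # Hypothetical inputs: an 8-GPU server, 4-year lifespan, and yearly
    # power/cooling/support costs. Replace with your own figures.
    params = dict(capex=300_000, lifespan_years=4, annual_opex=30_000, gpus=8)
    cloud_rate = 4.00  # hypothetical on-demand price per GPU-hour

    for utilization in (0.2, 0.5, 0.8):
        cost = on_prem_cost_per_gpu_hour(utilization=utilization, **params)
        print(f"utilization {utilization:.0%}: ${cost:.2f} per GPU-hour")

    threshold = break_even_utilization(cloud_rate=cloud_rate, **params)
    print(f"break-even utilization vs cloud: {threshold:.0%}")
```

The useful output is the break-even utilization: below it, on-demand cloud GPUs are cheaper per utilized hour under these assumptions, and above it the owned hardware wins, which is why sustained utilization is the deciding factor.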

Conclusion

On-premises GPU clusters offer enterprises a powerful foundation for deploying agentic AI pipelines with security, performance, and cost predictability. By maintaining control over infrastructure, data, and workload optimization, organizations can build systems tailored to their specific requirements while avoiding the unpredictable costs and latency challenges of cloud-only approaches.

The architectural patterns, communication strategies, and economic considerations outlined in this guide provide a roadmap for designing scalable, efficient agentic AI deployments. While cloud resources remain valuable for handling overflow capacity and experimentation, the core of a production agentic AI system benefits from the stability and control that on-premises infrastructure provides.

Ready to scale up your computing infrastructure for agentic AI? Exxact offers custom configurable solutions from individual compute nodes to full rack scale designs. Talk to an engineer today!
